The latest AI news from October 2025 marks a major milestone. Artificial intelligence is advancing quickly around the world and reshaping a wide range of fields.

This month brought significant developments in machine learning research and infrastructure. Technology is changing faster than ever. What once sounded like science fiction is already working quietly behind the scenes of everyday life.

I have spent more than 20 years watching these tools grow into products that help everyday people and teams. The benefits now range from cinematic video generation to autonomous shopping agents.

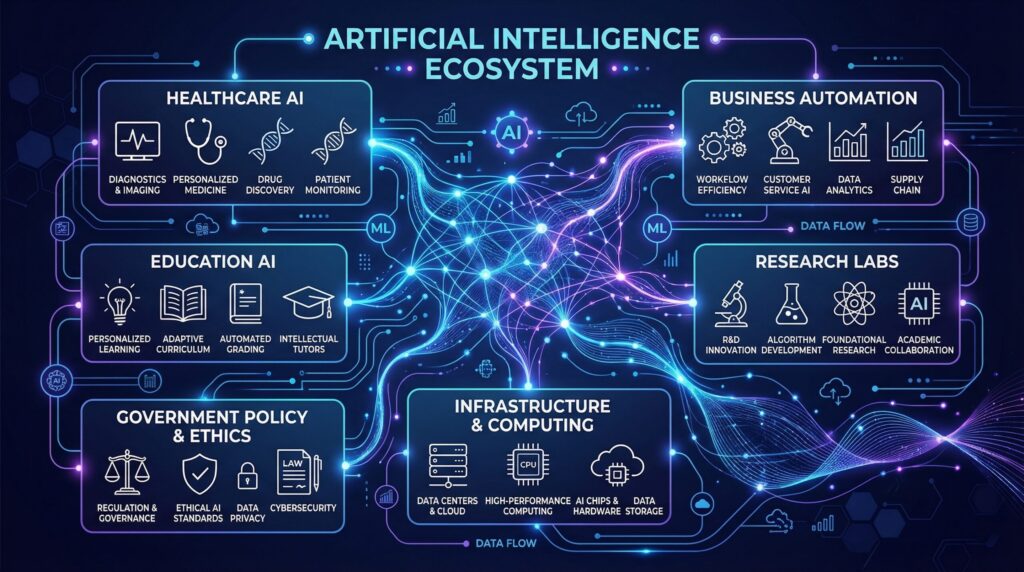

This monthly digest breaks down the most important updates. In AI-driven healthcare, doctors can treat patients with better insights. AI also supports business decisions and improves education.

It also helps governments write safer digital laws. Whether you are a student, business owner, or curious reader, this regular roundup matters. It covers recent announcements and shows how Google continues to make progress in crisis response.

These simple, friendly products can improve your daily life. Subscribe and follow to see how this October 2025 milestone is shaping a better world.

Latest AI Technology News October 2025: Major Product and Platform Launches

OpenAI: Apps in ChatGPT, Apps SDK, and AgentKit

OpenAI announced new Apps in ChatGPT and a preview of an Apps SDK. The aim is to connect ChatGPT with services that people already use, so they can complete tasks inside one conversation instead of moving between many apps or web pages.

Early partners include Booking.com, Canva, Coursera, Expedia, Figma, Spotify, and Zillow. These partners cover common needs such as travel booking, design work, online courses, music, and property listings, and show how Apps in ChatGPT can support everyday tasks in a single place.

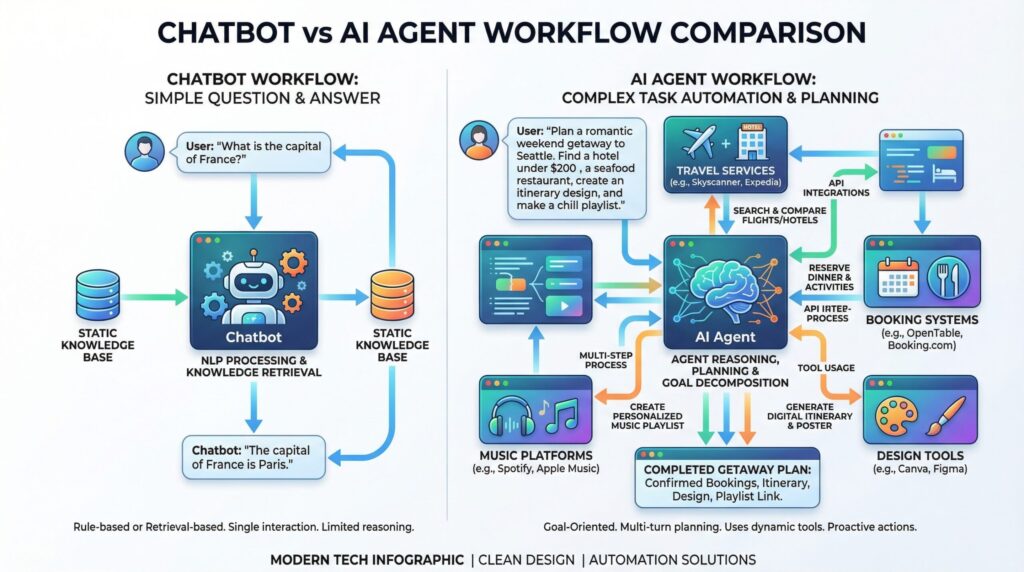

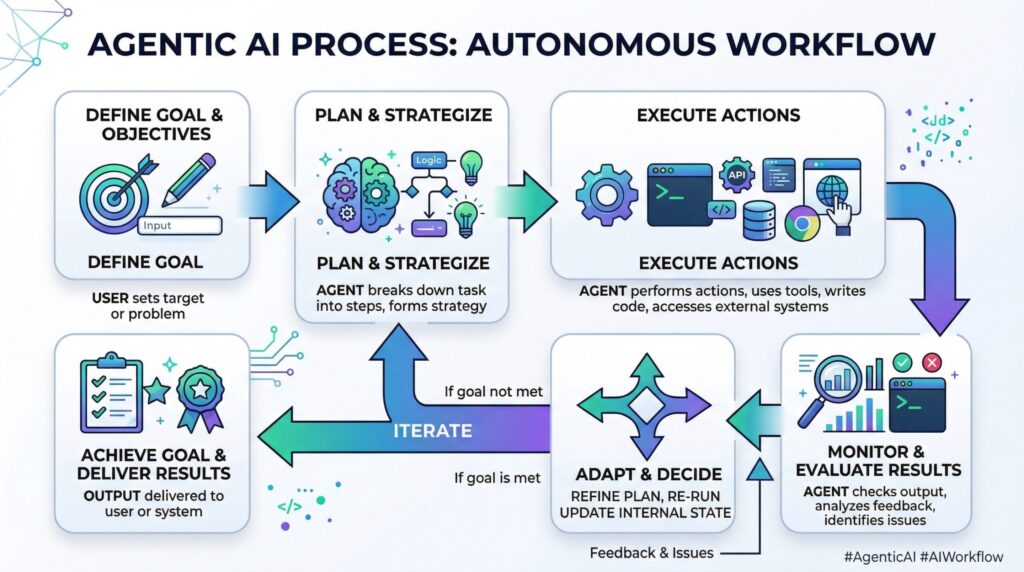

OpenAI also introduced AgentKit. It is a set of tools for teams that want to build agents. An agent can take a goal, break it into clear steps, and then carry out those steps, often by using tools or online services. This is not the same as a simple chatbot that only replies with text. An agent is designed to do work on behalf of the user, not just answer questions.

In business use, agents usually need three main types of control.

- First, permissions, so the system cannot act outside the allowed scope.

- Second, activity logs, so teams can review what the system did.

- Third, limits and checkpoints, so actions with higher risk require review or approval by a person.

These controls are important because systems that can take actions can also create real problems in operations if they make mistakes.
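The three controls above can be sketched in a few lines of Python. This is a minimal illustration, not how AgentKit or any vendor implements them: the `AgentGuard` class, the action names, and the status strings are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AgentGuard:
    """Hypothetical wrapper that applies three controls to agent actions."""
    allowed_actions: set          # permissions: the allowed scope
    needs_approval: set           # checkpoints: higher-risk actions
    log: list = field(default_factory=list)  # activity log for later review

    def run(self, action: str, approved_by: Optional[str] = None) -> str:
        if action not in self.allowed_actions:
            status = "denied"             # outside the allowed scope
        elif action in self.needs_approval and approved_by is None:
            status = "pending_approval"   # wait for a person to approve
        else:
            status = "executed"           # a real system would act here
        # Every attempt is recorded, including denied ones.
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "approved_by": approved_by,
            "status": status,
        })
        return status


guard = AgentGuard(
    allowed_actions={"read_calendar", "send_refund"},
    needs_approval={"send_refund"},
)
print(guard.run("read_calendar"))
print(guard.run("send_refund"))
print(guard.run("send_refund", approved_by="manager@example.com"))
print(guard.run("delete_account"))
```

Logging denied and pending attempts, not only successful ones, is a deliberate choice here: reviewers usually want to see what the system tried to do as well as what it did.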

OpenAI: ChatGPT Atlas and AI-Native Browsing

OpenAI also introduced ChatGPT Atlas. It is a web browser with ChatGPT built into it. The key change is how browsing connects to your work. Browsing is no longer a separate step that comes before the real work. Instead, the assistant is present while you move through pages and complete tasks online. The product also included an early version of an agent mode, plus privacy controls and safety rules.

This matters because many daily tasks still happen in a browser. People use it for research, checking and comparing options, and filling online forms for work and personal tasks. When browsing and the assistant work at the same time, you can do more inside one place. There is less need to copy text by hand between tabs and tools, and common tasks can finish in fewer steps.


Google: Home, Creation, Workplace, and Skills

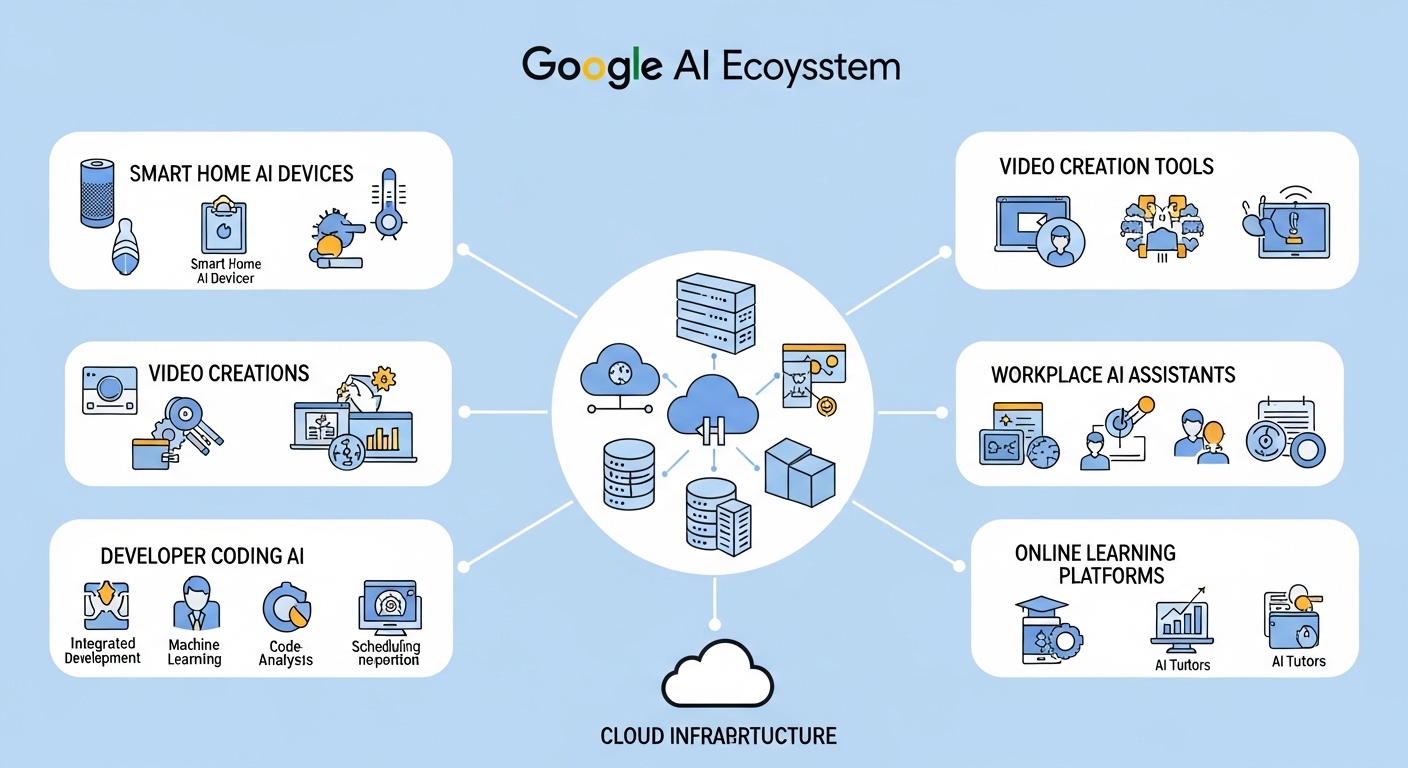

Google’s October recap shared updates for home devices, creator tools, workplace AI, and security.

For the home, Google announced Gemini for Home. It offers a more natural way to manage smart devices and replaces some older assistant-style experiences on certain speakers and displays.

For developers, Google highlighted a new “vibe coding” experience in Google AI Studio. You describe the kind of app you want, and Gemini links the right models and APIs to help build it. This does not replace proper engineering for live products, but it can reduce setup work and make early prototypes faster to create.

For creators, Google reported updates to Flow and Veo 3.1. The main goal is to give more control in video creation. The recap described using several images to guide characters and visual style, connecting frames smoothly between scenes, and generating audio that matches the video across different features.

For workplace use, Google introduced Gemini Enterprise as a single main entry point for AI at work. It is described as grounded in company data and designed for strong governance of AI agents. Google also mentioned anti-scam measures and AI security updates, linked to Cybersecurity Awareness Month.

On training and skills, Google launched Google Skills, a learning platform with about 3,000 courses and labs. Google also released Gemini 2.5 Computer Use in preview through the Gemini API. It is meant to support agents that can interact directly with software interfaces. This is important because many organizations want AI systems that can work with their existing software and screens, not only generate text.

Other Vendor Updates

Anthropic announced that it is increasing its compute capacity through Google Cloud TPUs. The company expects access to up to one million TPUs, with well over a gigawatt of capacity planned to come online in 2026. This shows a basic point: large AI models need very large infrastructure, and these infrastructure plans influence when new products can launch.

Mistral launched Mistral AI Studio as a platform for running AI systems in production. It was described as including observability tools, an agent runtime, and an AI registry, so teams can watch their systems, run agents, and manage models in one place.

Microsoft introduced Copilot Portraits in Copilot Labs as a test for voice interaction with avatar-style interfaces. The company also expanded AI-based teaching and learning tools across Microsoft 365 Education licenses, so more schools and students can use them.

October 2025 Timeline of Key Items

| Date (Oct 2025) | What Was Announced (High Level) |
| --- | --- |
| Oct 6 | OpenAI developer announcements, including Apps in ChatGPT, Apps SDK, and AgentKit |
| Oct 7 | Google previewed Gemini 2.5 Computer Use via the Gemini API |
| Oct 15 | Google shared Cell2Sentence-Scale 27B and also posted updates to Flow and Veo 3.1 |
| Oct 16 | Google DeepMind described a collaboration with Commonwealth Fusion Systems |
| Oct 21 | OpenAI introduced ChatGPT Atlas |
| Oct 23 | Anthropic described expanded TPU access through Google Cloud |
| Oct 24 | Mistral introduced Mistral AI Studio |

Agentic AI and Computer Use Capabilities

AI agents can plan a task, take an action, check the result, and then continue with the next step. The updates in October 2025 show this shift from answering questions to getting work done.
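The plan, act, check loop described above can be sketched as a small Python function. This is a toy illustration under stated assumptions: `run_agent`, `plan`, `act`, and `check` are hypothetical names, and the "task" is filling in a dictionary that stands in for a form. A real agent would call a model to plan and real tools to act.

```python
def run_agent(goal, plan, act, check, max_steps=10):
    """Plan a task, then act on and check each step until done or a step fails."""
    history = []
    for step in plan(goal)[:max_steps]:
        result = act(step)            # take an action
        ok = check(step, result)      # check the result before continuing
        history.append((step, result, ok))
        if not ok:
            break  # stop so a person or a new plan can handle the failure
    return history


# Toy example: the "system" is a dictionary standing in for a form.
form = {}

def plan(goal):
    # A real planner would derive steps from the goal; these are fixed.
    return [("name", "Ada"), ("email", "ada@example.com")]

def act(step):
    key, value = step
    form[key] = value
    return form.get(key)

def check(step, result):
    return result == step[1]

history = run_agent("fill in the signup form", plan, act, check)
print(all(ok for _, _, ok in history), form)
```

The step budget (`max_steps`) and the early stop on a failed check are the parts that matter most in practice, because they keep a mistaken plan from running unattended.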

What Changed in October 2025

Two product releases showed this direction clearly. Apps in ChatGPT linked conversations to supported services, so tasks could progress without changing to other tools. Gemini 2.5 Computer Use was released in preview as a model designed for screen-based workflows, where the system can carry out tasks by operating what appears on the screen.

Two Practical Paths to Action

- UI operation (computer use): With Gemini 2.5 Computer Use, the system can look at what is shown on the screen, take an action, and then check what changed before it continues. This matches many tasks that still run through websites and desktop apps, such as updating records, entering data, or moving through forms step by step.

- Direct service integration: With Apps in ChatGPT, the conversation connects straight to partner services. For tasks covered by these partners, this can reduce extra steps on the screen and make the process more stable and predictable, because more of the work happens through a known integration instead of many manual clicks.

Interoperability and Standards

As more tools connect to agents, MCP (Model Context Protocol) was mentioned as one part of standardization work.

Its main advantage is that it makes it easier to connect different tools and manage them in a clear way. It also makes auditing simpler, especially in large organizations where the same governance rules need to apply across many systems.
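To make the auditing point concrete: MCP messages follow JSON-RPC 2.0, and tool invocations use a `tools/call` method with a tool name and arguments. The sketch below builds such a request in plain Python and derives a one-line audit record from it. The tool name `search_flights`, its arguments, and the `audit_line` helper are hypothetical and chosen only for illustration.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request shaped like an MCP tool invocation."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def audit_line(request):
    """Hypothetical helper: summarize a request for an audit log."""
    return f'{request["method"]} {request["params"]["name"]} id={request["id"]}'


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call(1, "search_flights", {"origin": "LHR", "destination": "NRT"})
print(json.dumps(request, indent=2))
print(audit_line(request))
```

Because every tool call has the same shape regardless of which service sits behind it, one logging rule can cover many integrations.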


AI Breakthroughs October 2025 in Science and Healthcare

Cancer Research

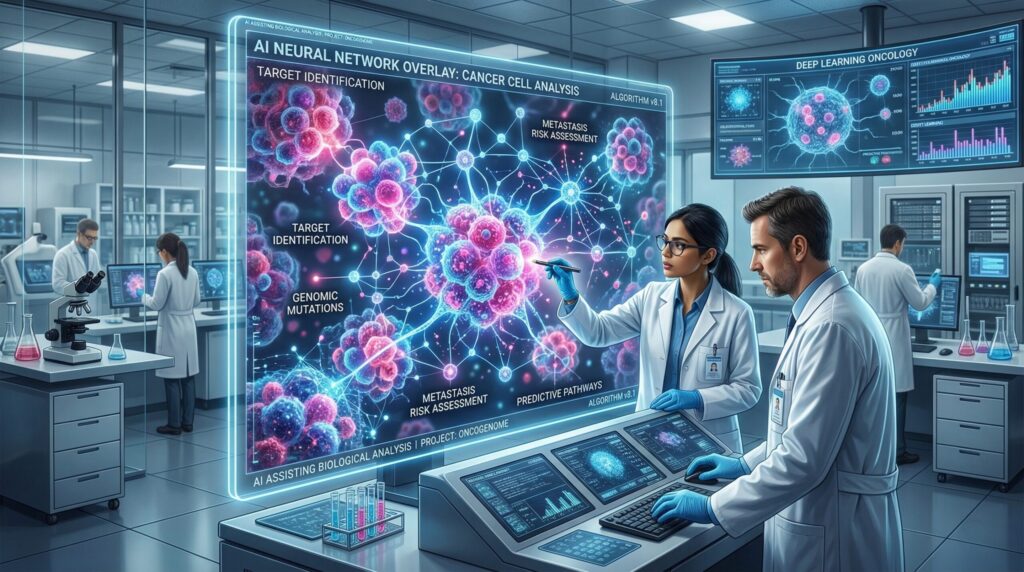

In healthcare, some of the most important October 2025 updates were in cancer and genetics research. Google introduced Cell2Sentence-Scale 27B, a single-cell foundation model based on Gemma. Google reported that this model produced a scientific idea (a hypothesis) that was later confirmed in lab tests. The work is aimed at understanding how cells behave and at opening new options for cancer treatment, including methods related to how the immune system detects cancer cells.

Google also mentioned DeepSomatic, an open-source model designed to speed up genetic analysis in cancer research. In this context, a foundation model means a large model trained on broad data and then adapted for specific tasks. Here, the important point is the type of input. The model uses biological data about cells, not general text. This changes how the model is trained, how it is used, and how its results are checked.

Fusion Energy

In energy research, Google DeepMind and Commonwealth Fusion Systems announced a collaboration to advance fusion work. Fusion needs tight control of plasma under extreme heat and pressure inside reactors. AI helps guide that control and lets teams test ideas more quickly.

It can find patterns, suggest new settings, and improve after each run. Even so, AI remains a support tool, and experts still lead the main physics and engineering work. For everyday users, these changes mean AI systems that handle more tasks, while laws and standards try to keep up with how they are used.

October 2025 AI Regulation News, Governance, and Public-Sector Policy Updates

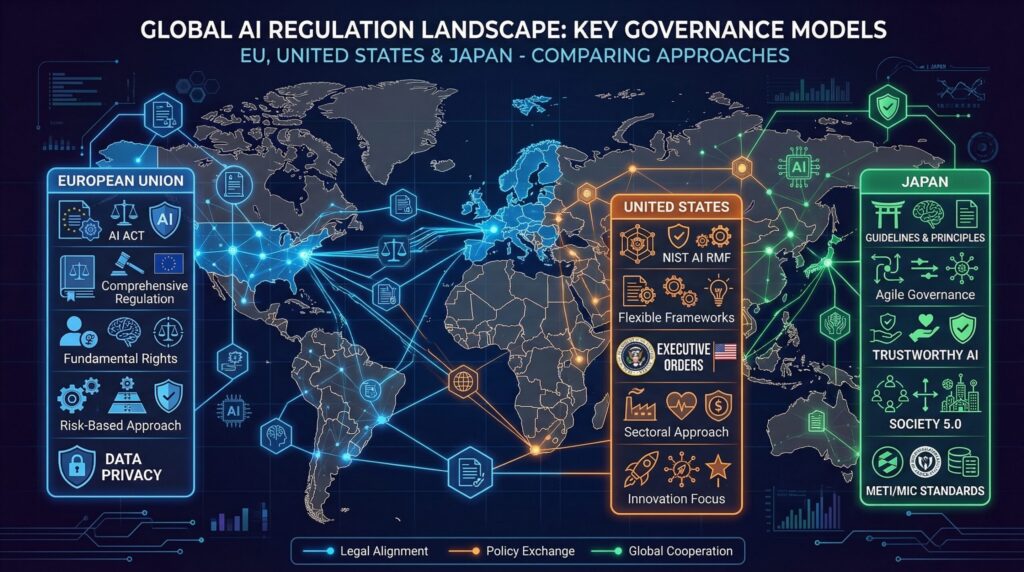

European Union

The EU AI Act moved into a compliance stage for GPAI (General Purpose AI). GPAI means broad models that can support many different tasks, instead of being built for one narrow use. The new rules focus on transparency, clear documentation, and careful risk checks, with stricter demands for systems that have very high capability or impact.

The European Commission also announced plans to support adoption and research, including programs called Apply AI and AI in Science. The main point is that documentation and safety processes are now basic requirements for AI work. They are no longer optional additions that teams can ignore or leave for later.

Japan

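Japan’s recent updates showed higher expectations for AI in regulated sectors. The Ministry of Economy, Trade and Industry (METI) revised its generative AI guidelines with a clear focus on healthcare, finance, and critical infrastructure. The Pharmaceuticals and Medical Devices Agency (PMDA) explained how AI-supported medical devices should be assessed. The Personal Information Protection Commission (PPC) stressed the importance of consent and of using only the minimum necessary data under the Act on the Protection of Personal Information (APPI).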

For companies working in Japan, this means that documentation, privacy controls, and clear safety validation steps are now very important, especially for systems used in healthcare and other sensitive areas.

United States

While tools grew stronger, rules for AI also grew tighter in many regions. The EU pushed one main rule book, but the U.S. took a split path. It leaned on sector agencies and state laws instead of one shared framework.

California stood out with SB 53 and SB 243 on safety checks and AI labels. The hard part is the patchwork of rules, which creates extra work for teams operating in many states. By October 2025, high-risk and high-capability systems faced far more pressure.

Security, Misuse, and Safety Reporting

What the OpenAI Threat Report Described

OpenAI’s threat report said that, since early 2024, it has reported and disrupted more than 40 organized threat networks. It described similar patterns across many cases.

Threat groups used AI tools to improve methods they already had, rather than creating completely new methods. Some groups used more than one model in the same operation. Others changed their behavior to lower detection signals, for example by asking models to remove certain punctuation, such as long dashes.

The report also described a gray area. Many prompts can appear normal on the surface, but can support harmful activity when seen in full context.

OpenAI stated that clear, direct malicious requests were blocked. It also said it found no evidence that access to its models created new types of harmful technical capability. The main changes were faster work, better language quality, and greater scale.

Security Features Announced for Users

Google mentioned new anti-scam and AI security measures connected to Cybersecurity Awareness Month. The direction matches how AI products are changing.

As assistants carry out more actions on behalf of users, security needs to cover risks in workflows, strong permission controls, and safer default settings.

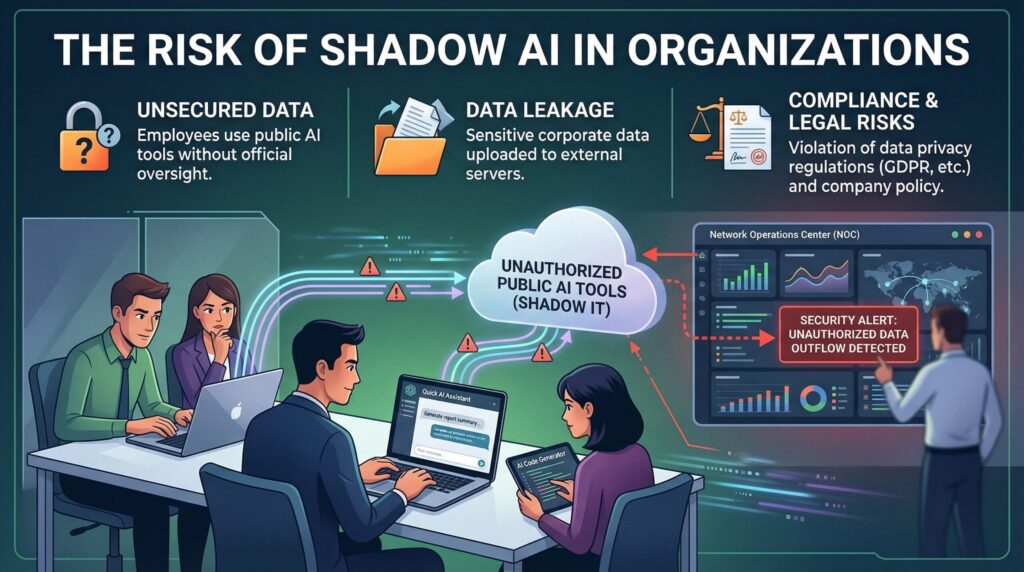

Workplace Risk: Shadow AI

Shadow AI is when employees use AI tools that are not approved by their organization and include sensitive data in those tools, often because the tools are fast and convenient.

Organizations that manage this in a strong way set clear approval rules, explain which types of data must never be shared, and provide an approved AI option that is practical enough that people will use it in their normal work.
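One way to back up a "never share this data" rule is a simple check that runs before text is sent to any external AI tool. The sketch below is a rough illustration only: the pattern list, labels, and `find_blocked_data` function are hypothetical, and simple regular expressions like these will miss many cases and flag some harmless ones. Real deployments usually rely on dedicated data loss prevention tooling.

```python
import re

# Hypothetical patterns for data that must not leave the organization.
BLOCKED_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "card-like number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "api key marker": re.compile(r"(?i)\b(?:api[_-]?key|secret)\b\s*[:=]"),
}


def find_blocked_data(text):
    """Return the labels of every blocked pattern found in the text."""
    return [label for label, pattern in BLOCKED_PATTERNS.items()
            if pattern.search(text)]


for prompt in [
    "Summarize our Q3 roadmap themes",
    "Email jo@example.com about card 4111 1111 1111 1111",
]:
    hits = find_blocked_data(prompt)
    print("blocked:" if hits else "ok:", prompt, hits)
```

A check like this works best alongside an approved tool, so that a blocked prompt leads people to a safe option instead of a workaround.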

Final Thoughts about October 2025 AI News

October 2025 showed AI moving into more practical use. Products became better at helping people complete full tasks inside apps, browsers, and workplace tools.

Research work tied AI to hard scientific problems, while rules and standards raised the bar on safety, privacy, and clear documentation. Big infrastructure and strong governance stayed important limits on how fast systems can grow.

FAQs – Latest AI News October 2025

Why did October 2025 matter for everyday AI users?

October 2025 was a turning point for everyday AI users. Updates across apps and browsers let people finish real tasks without leaving their tools. AI stopped being a side chat box and moved into the main workflow. These tools began to handle full tasks, not just short replies.

What is the difference between a chatbot and an agent?

A chatbot mainly answers questions with text. An agent can take a goal, turn it into steps, and use tools or online services to complete those steps. Because agents can change real systems, such as accounts, records, and settings, they need stronger controls and careful checks.

What does computer use mean in AI systems?

Computer use means an AI system can work directly with software interfaces, such as a browser or a desktop application. The aim is to let assistants handle tasks inside existing software, not only produce text that a person then has to copy or apply by hand.

Why are compute deals discussed in gigawatts?

Training and running large models requires data centres with very high power and cooling demands. Gigawatt figures show the size of the power capacity behind these data centres, not only how many chips or servers are ordered.

What does GPAI mean in the EU AI Act discussion?

GPAI stands for General Purpose AI. It refers to broad models that can support many tasks and products. In the EU AI Act, GPAI is linked to rules on transparency, clear documentation, and careful risk management for these general models.
