The latest AI news from September 2025 showed a clear shift in how products use AI. Many companies built AI into everyday features people already rely on. Browsers, search, writing tools, and coding tools all received major updates.

At the same time, more attention went to how these systems are built and used. Teams had to think about training data, content rights, and safety checks. For many companies, the main issue was not access to AI. The main issue was control, review, and clear ownership.

What AI Changed in September 2025

This summary covers the AI news from September 2025 that mattered most for products, teams, and governance.

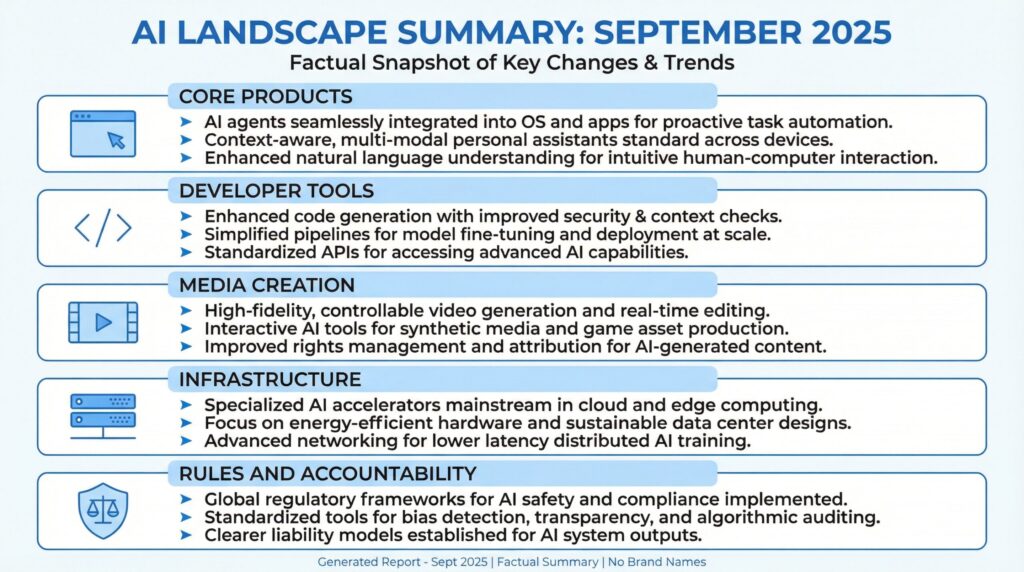

| Area | What Shifted | Why It Mattered |
| --- | --- | --- |
| Core products | AI moved deeper into browsing, search, and writing tools | Workflows became faster and more direct |
| Developer tools | Coding support moved toward longer, multi-step tasks | Teams could use AI for real project work, not only snippets |
| Media creation | Short-form video and image tools became easier to use inside apps | AI media moved closer to everyday use |
| Infrastructure | Scaling plans were described in power and capacity terms | Electricity, sites, and build speed became real limits |
| Rules and accountability | Lawsuits, disclosures, and safety research gained momentum | More expectation to show responsible deployment |

AI Breakthroughs September 2025: The Shifts That Mattered Most

Most AI breakthroughs in September 2025 came down to three simple changes:

- AI started helping people finish full tasks, not just give short replies.

- More tools could handle text, images, and sometimes voice in one flow.

- More companies needed clear rules for data use and who is responsible.

These changes shaped product design, business use, and infrastructure planning. They also changed what teams had to review before releasing new features.

AI News September 2025: Browsing and Search Updates

AI was no longer limited to a chat screen. More tools started offering AI help inside the places people already work, especially in browsers and search.

AI Features Inside Browsers

Google’s September roundup described a larger role for Gemini inside Chrome. The goal was to support browsing tasks without requiring a separate tool. That includes understanding what is on a page, working across open tabs, and helping with step-by-step tasks.

This matters because the browser is where many work tasks begin. People read documentation, compare vendors, review policies, and write notes based on what they find online. When the AI feature lives in the same space, users spend less time copying and pasting, and more time turning information into something useful.

It also changes how research happens. Instead of reading many pages and writing a summary from scratch, users can create a first draft faster and then spend their time checking it and improving it.

Search That Handles Longer Questions

Google also described updates to AI Mode in Search. The direction was toward longer, more detailed questions, with answers shown in a clearer format. Instead of treating search as keyword matching, the system is designed to interpret the full request and respond with a structured answer.

When search responds this way, the first screen changes. Users may see an AI-written response before a list of links. That can save time for common tasks, but it also creates new questions about how source material is used and how much traffic publishers still receive.

Search Live, Visual Search, and Language Expansion

Another part of the update was Search Live, which connects voice questions with what a phone camera sees. It is designed for real-time help when typing is slow or when it is hard to describe what you are seeing in text.

Google also described stronger visual search methods. When a search system can use images and natural language together, users can share what they see and ask what they need without perfect wording. This is one of the clearer examples of multimodal AI in consumer products, and one of the September 2025 AI breakthroughs that people could use in daily life. The term sounds technical, but the idea is simple: real work often starts with an image, not a paragraph of text.

Google also highlighted expansion to more languages for AI Mode. Early AI search features often launched in a limited set of languages. Wider language coverage is usually a sign that the company is preparing the feature for broader public use, where language support is a basic requirement.

AI Writing and Learning Tools Improved

September updates were not only about search. Many changes focused on writing, editing, and learning tasks that people handle every day.

On-Device Writing Help

Google described new writing tools in Android that help adjust tone, fix grammar, and polish text. Because these tools sit inside the device, people do not need to copy text into a separate app. That makes quick edits easier for work messages, notes, and short drafts.

These updates matter because they save small bits of time all day. Many people do not want a long chat just to improve one paragraph. They want a quick fix and to keep working. When writing help is built into typing, more people use it because it takes less effort.

Learning Tools Built Around Your Own Sources

Google also shared updates for NotebookLM, designed to work with your own notes and sources. Features like flashcards and quizzes grounded in uploaded material are meant to support study, training, and structured review.

This approach is different from general web answering. It is closer to assisted learning and assisted internal research. It can be useful for onboarding, training programs, and personal study notes, especially when people need structure and repetition.

It still needs human review when the output will be used in policy, customer communication, legal work, or other high-stakes decisions. The value is speed and structure, not guaranteed correctness.

More Assistant Features Inside the Main App

Google also described the Gemini app as a more central place for AI tasks. That included shareable Gems (custom assistant setups) and Canvas for building simple apps without code. The direction stayed consistent across products: AI features were designed to support tasks in the same environment where users already work.

Coding Tools Moved Toward Longer Tasks

Developer tools also changed in September 2025. Many teams moved past quick code snippets. They wanted help that lasts through a longer work session, the way real software is built and updated.

Codex Updates and Multi-Step Work

OpenAI shared upgrades to Codex, including GPT-5-Codex as the default for cloud tasks and code review, with options to use it locally through a CLI and an IDE extension.

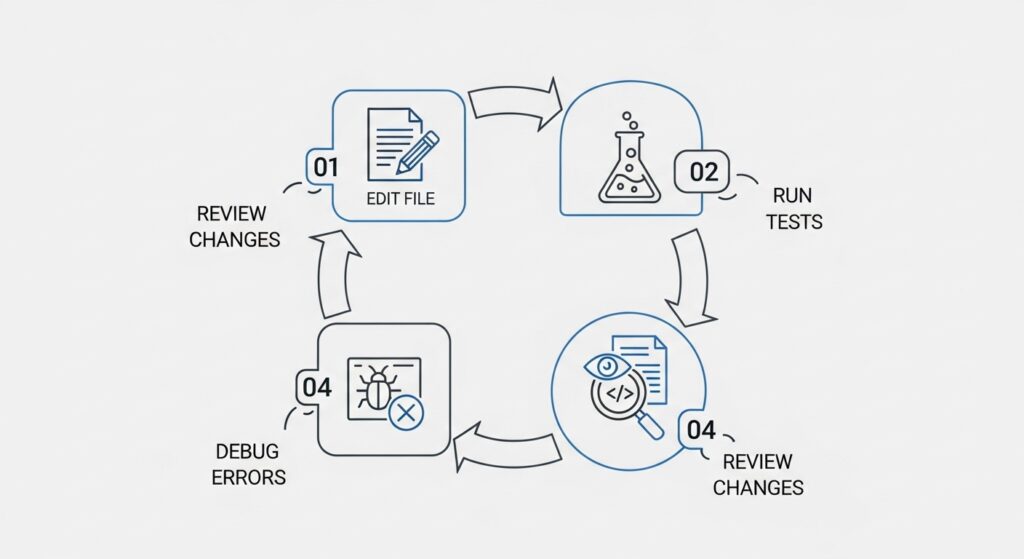

The important part here is the workflow. Real development work often includes editing multiple files, following existing patterns, running tests, and debugging failures. A tool becomes more useful when it supports that full loop, not only the first draft of code.

How Longer Workflows Change Engineering Review

Longer AI coding sessions can help because developers do not need to repeat the same details again and again. The tool keeps the context, so the work is often more consistent across updates.

But longer workflows also have a risk. If the AI makes a small mistake and keeps going without anyone checking, that mistake can end up in many parts of the code. That is why teams still need regular check-ins, tests, code review, and clear limits on what the tool can change.
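
One way to make "clear limits on what the tool can change" concrete is a scope check that runs before AI-generated changes are accepted. The sketch below is a minimal illustration, not a feature of any particular coding tool. The `ALLOWED` and `BLOCKED` patterns and the `out_of_scope` helper are hypothetical names chosen for this example.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: glob patterns the AI tool may edit,
# and patterns it must never touch even inside an allowed area.
ALLOWED = ["src/*", "tests/*"]
BLOCKED = ["src/payments/*", "*.env"]


def out_of_scope(changed_paths):
    """Return the changed paths that fall outside the agreed scope."""
    violations = []
    for path in changed_paths:
        allowed = any(fnmatchcase(path, pattern) for pattern in ALLOWED)
        blocked = any(fnmatchcase(path, pattern) for pattern in BLOCKED)
        # A path is a violation if it is explicitly blocked
        # or if no allow pattern covers it.
        if blocked or not allowed:
            violations.append(path)
    return violations


if __name__ == "__main__":
    changed = [
        "src/app/main.py",
        "src/payments/charge.py",
        "deploy/prod.env",
        "tests/test_main.py",
    ]
    print(out_of_scope(changed))
```

A check like this could run in continuous integration, so a long AI session that drifts into a restricted area is flagged for human review instead of being merged automatically.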

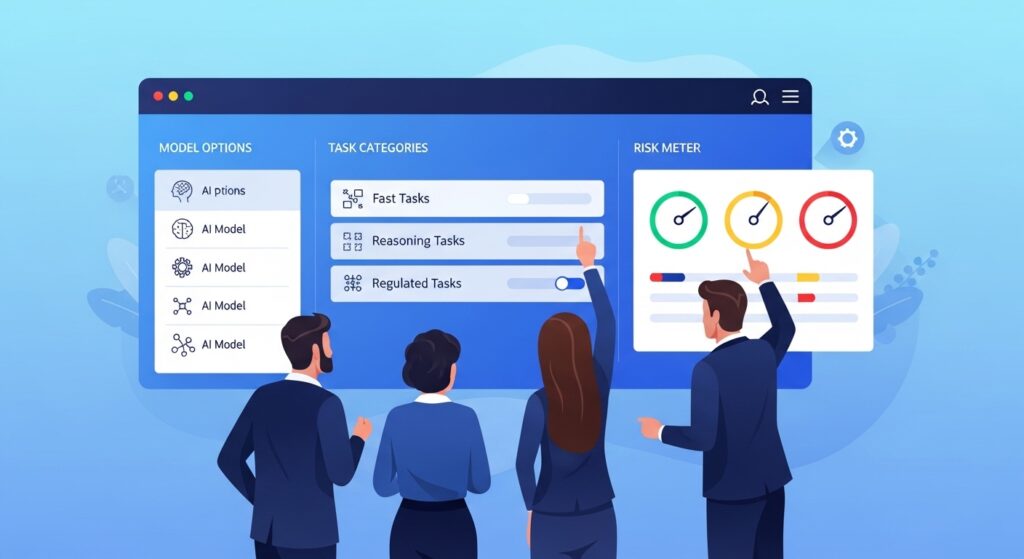

Enterprise Tools Shifted Toward AI Model Choice

In September 2025, many enterprise AI platforms started moving away from using only one default model. Instead, they began offering more flexibility so companies could pick different models for different kinds of work.

Microsoft 365 Copilot and Multi-Model Support

Microsoft said Microsoft 365 Copilot would expand its model options. This included access to Claude Sonnet 4 and Claude Opus 4.1 in features such as Researcher and agent building in Copilot Studio.

This mattered because business tasks are not all the same. Some work needs fast results and lower cost. Other work needs stronger reasoning for complex problems. Some areas also need stricter behavior for sensitive or regulated topics. Model choice helps organizations match the model to the task and the level of risk.
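
Matching a model to the task and its risk level can be sketched as a small selection rule. Everything in this example is an assumption made for illustration: the model labels, cost figures, and capability scores are hypothetical and do not describe any vendor's catalog or Microsoft's routing logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str                     # hypothetical label, not a real product
    cost_per_task: float          # illustrative relative cost
    reasoning: int                # illustrative capability score, 1 to 3
    approved_for_regulated: bool  # cleared for sensitive or regulated work


# Hypothetical catalog an organization might maintain.
CATALOG = [
    Model("fast-small", 1.0, 1, False),
    Model("balanced", 3.0, 2, True),
    Model("deep-reasoner", 10.0, 3, True),
]


def pick_model(min_reasoning, regulated):
    """Pick the cheapest model that meets the reasoning and risk needs."""
    candidates = [
        m for m in CATALOG
        if m.reasoning >= min_reasoning
        and (m.approved_for_regulated or not regulated)
    ]
    if not candidates:
        raise ValueError("no model satisfies the requirements")
    return min(candidates, key=lambda m: m.cost_per_task)


if __name__ == "__main__":
    print(pick_model(min_reasoning=1, regulated=False).name)
    print(pick_model(min_reasoning=1, regulated=True).name)
```

The rule filters on risk first and optimizes cost second, so a cheaper model is never chosen for work it has not been cleared to handle.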

Workplace Use Was Already Rising

Workplace AI use was also rising. The Anthropic Economic Index report for September 2025 cited Gallup survey data showing 40 percent of U.S. employees said they used AI at work, up from 20 percent in 2023.

When AI use becomes that common, companies usually create clearer governance. That includes which tools are allowed, what data can be shared, how usage is tracked, and when a human must review AI output before it is used or shared.

AI Video and Image Tools Became Easier to Use

Several September releases showed AI media moving into products people use casually, not only specialist tools.

Short-Form AI Video Inside Social Apps

Meta introduced Vibes, a feed inside the Meta AI app and on meta.ai for creating, discovering, and remixing short AI-generated videos. The design matters here. When creation tools connect directly to a feed, people learn by example and reuse patterns through remixing, which can make the feature easier to adopt.

This also changes distribution. The content is not only created, it is surfaced in the same loop. That often increases the volume of AI-generated media users see day to day.

Higher-End Video Generation for Consumers

OpenAI released Sora 2, describing improvements in realism and controllability, plus synchronized dialogue and sound effects, with creation happening through a dedicated Sora app.

When video generation becomes easier to access, it becomes a normal format people expect to see. That creates practical issues for platforms and brands, including labeling, brand safety, copyright questions, and internal policies for what can be published and when review is required.

Robots and Physical Agents Took a Step Forward

Robotics also stood out as an AI breakthrough in September 2025, especially through Google DeepMind's updates. Google described work on AI systems that help robots see, plan, and act in physical environments, including multi-step task handling where conditions change.

Physical work is unpredictable. Lighting changes, objects shift, and tasks often require tool use and planning. Progress in this area matters because the cost of mistakes is higher than in digital workflows. If an AI tool makes a wrong suggestion in a document, you can edit it. If a physical robot makes a wrong move, it can break something or hurt someone. That is why robotics progress often increases attention on reliability and safety standards.

Infrastructure Became an Energy and Capacity Issue

In September 2025, infrastructure news was not only about chips. It also centered on power, sites, and build speed.

Why Gigawatts Became a Key Metric

NVIDIA and OpenAI announced a strategic partnership tied to deploying at least 10 gigawatts of NVIDIA systems for OpenAI infrastructure, with NVIDIA intending to invest up to $100 billion as those systems are deployed.

A gigawatt is one billion watts, a very large amount of power. When AI infrastructure is described in gigawatts, it means the conversation has moved to real constraints: electricity supply, grid upgrades, permitting, cooling, and how quickly data centers can be built and connected.
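
To get a feel for the scale, the short calculation below converts a power figure in gigawatts into energy used over a year. It is a rough sketch. The `utilization` parameter is an assumption added for illustration, since real facilities do not draw their full rated power around the clock.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(power_gw, utilization=1.0):
    """Energy in terawatt-hours for a load of power_gw running for one year.

    utilization is the assumed average fraction of rated power actually drawn.
    """
    energy_gwh = power_gw * HOURS_PER_YEAR * utilization
    return energy_gwh / 1000  # 1 TWh = 1,000 GWh


if __name__ == "__main__":
    # The 10 GW figure comes from the announcement described above.
    print(annual_energy_twh(10))
```

Under the full-utilization assumption, 10 gigawatts running for a year works out to about 87.6 terawatt-hours, which is why grid capacity and permitting enter the conversation.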

Stargate Expansion Plans

OpenAI also described expanding Stargate with partners including Oracle and SoftBank, adding five new AI data center sites as part of a larger buildout. OpenAI said this brings Stargate to nearly 7 gigawatts of planned capacity and over $400 billion in investment over the next three years.

Even if timelines shift, the point is clear. AI growth at this scale is now tied to long-term infrastructure planning, not just software releases.

A Real Sign of Demand in the U.S.

Infrastructure demand also showed up in real spending. Bank of America Institute reported U.S. data center construction spending hit a record $40 billion seasonally adjusted annual rate in June 2025, up about 30% from the prior year.
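
The growth figure also implies a rough prior-year level. The sketch below backs it out from the two numbers quoted above. Because "about 30%" is rounded, the result is an approximation and not a figure from the report itself.

```python
def prior_level(current, growth_rate):
    """Back out the earlier level from the current level and a growth rate."""
    return current / (1 + growth_rate)


if __name__ == "__main__":
    # $40 billion annual rate, up about 30% year over year (both from the text).
    print(round(prior_level(40.0, 0.30), 1))
```

That puts the year-earlier annual rate at roughly $31 billion, so the increase was on the order of $9 billion.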

This helps explain why September AI coverage leaned so heavily toward infrastructure. Many companies were not only talking about compute needs. They were already spending at record levels to meet them.

Model Releases and Global Competition Stayed Strong

Large model development continued outside the biggest U.S. labs, and funding activity showed long-term regional goals.

Alibaba’s Qwen Release

Alibaba’s Qwen team described Qwen3-Max as a model with over one trillion parameters, positioned for areas like coding and agent-style work.

The practical takeaway is that competitive models and serious engineering work are not limited to one region. For teams evaluating AI platforms, it also means the menu of options keeps expanding, which affects procurement, cost comparisons, and long-term vendor planning.

Europe’s Investment Signal

ASML announced a strategic partnership with Mistral AI. It also said it invested €1.3 billion in Mistral’s Series C round, which equals about an 11% stake on a fully diluted basis.
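
The two figures in the announcement imply an approximate valuation for Mistral. The calculation below is a back-of-the-envelope sketch that assumes the stake is measured on the same fully diluted basis as the investment. Since "about 11%" is rounded, the output is an estimate and not a reported number.

```python
def implied_valuation(investment, stake_fraction):
    """Valuation implied by paying `investment` for `stake_fraction` of a company."""
    if not 0 < stake_fraction <= 1:
        raise ValueError("stake_fraction must be between 0 and 1")
    return investment / stake_fraction


if __name__ == "__main__":
    # EUR 1.3 billion for about an 11% stake (both figures from the text).
    print(round(implied_valuation(1.3, 0.11), 1))
```

On these inputs, the implied valuation is a little under €12 billion.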

Deals like this usually aim for more than profit. They show long-term interest in AI skills, product strength, and control over key parts of the supply chain in Europe.

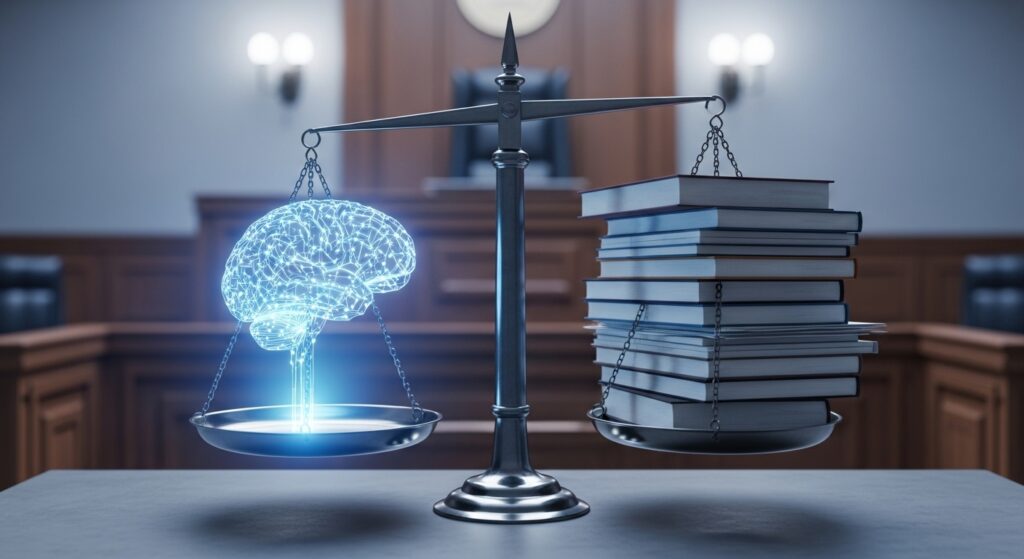

AI Rules, Lawsuits, and Safety Checks Became More Important

In September 2025, legal and policy topics became part of the main AI story. They affected how teams thought about risk, training data, and use of published content.

State-Level Safety Disclosures

California’s SB 53 moved forward during the month. It focused on safety disclosures and whistleblower protections for teams building powerful AI models.

State rules can affect more than one state. Large vendors may adjust contracts and product terms to meet these rules. That can also shape internal review and risk steps, even before federal rules are clear.

These changes were not limited to the U.S. For example, Japan’s updated AI policy direction also showed how governments are tightening oversight. You can read a detailed breakdown in our coverage of Japan AI regulation news 2025.

Copyright Cases With Real Cost Impact

Anthropic agreed to a reported $1.5 billion settlement tied to claims that pirated books were used in training. A judge gave preliminary approval later in September.

Apple also faced a lawsuit from authors with similar claims about book use in AI training.

For businesses, the key point is simple. Training data choices can create legal and cost risk. That risk can also affect customers through vendors, tools, and contracts.

Publisher Disputes Over AI Summaries

Penske Media sued Google over AI summaries in search. The claim was that summaries use publisher reporting while reducing site visits.

This matters because it is about money and traffic. If AI answers reduce clicks, publishers will ask for clear permission, credit, and payment. That can shape how AI search features work over time.

Safety Research on Misuse and Deception

OpenAI published research on how to spot and reduce deceptive behavior in advanced models. The work was done with Apollo Research.

This matters more as AI tools take on longer tasks. When a system can take many steps, mistakes can cause more harm. That risk is higher in areas like finance, security, and automated work tools.

What These AI Updates Meant for Teams

For most organizations, September 2025 did not require a full reset. It did make several decisions harder to avoid.

In September 2025, AI features became easier to access inside core products, which raised basic questions. Where can AI act on its own, and where must a person approve the output? What data can a tool access, and what data must stay out? Which model is allowed for which task?

Many teams made progress by treating AI adoption like any other system change:

- Define which tasks need human approval before anything goes out.

- Set rules for what data can be shared with outside tools.

- Choose models based on risk and cost, not only output quality.

- Plan for capacity limits, since power and data centers can slow delivery.
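
The first two rules in this list can be written down as a simple policy check. The sketch below is one hypothetical way to encode them. The task names, data classes, and the `review` function are assumptions made for illustration, not a standard or a vendor feature.

```python
from dataclasses import dataclass

# Hypothetical policy values an organization would define for itself.
APPROVAL_REQUIRED = {"customer_email", "legal_summary", "policy_update"}
DATA_ALLOWED_EXTERNALLY = {"public", "internal"}


@dataclass
class Request:
    task: str            # what the AI output will be used for
    data_class: str      # sensitivity label of the input data
    external_tool: bool  # True if the data leaves the organization


def review(request):
    """Return (allowed, needs_human_approval) for a proposed AI use."""
    # Rule: restricted data never goes to outside tools.
    if request.external_tool and request.data_class not in DATA_ALLOWED_EXTERNALLY:
        return (False, False)
    # Rule: listed tasks need a person to approve the output before it goes out.
    return (True, request.task in APPROVAL_REQUIRED)


if __name__ == "__main__":
    print(review(Request("customer_email", "internal", True)))
    print(review(Request("meeting_notes", "confidential", True)))
```

Keeping the rules in one place makes them easy to audit and update as tools and contracts change.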

Final Thoughts

September 2025 confirmed that AI is now part of everyday software work, and this recap of the latest AI news from September 2025 explains why. AI is built into tools people already use, so it is easier to adopt. But mistakes can spread faster too, because AI output moves quickly.

The teams that managed this well kept it simple. They set clear limits, required human review in key places, and protected sensitive data. In practice, these controls mattered more than chasing every new feature.

If you want to see what happened next in the AI industry, read our detailed update on Latest AI News October 2025, where we cover major product launches, research breakthroughs, and global AI policy changes.

FAQs

What was the biggest change in September 2025 AI updates?

AI was added directly into tools people already use, like browsers, search, writing apps, and coding tools. It felt more like a built-in feature than a separate chat app.

What does multimodal AI mean in simple terms?

It means AI can work with more than just text. It can also understand images, and sometimes voice.

Why did AI infrastructure news focus on gigawatts?

Because running AI needs a lot of electricity. Gigawatts show how much power large AI data centers can use, so power supply and building capacity became major limits.

Why are lawsuits about training data important for businesses?

They can create legal and financial risk. Even if a company does not train AI models, the tools it uses might. That is why businesses care more about contracts, audits, and data rules.

How should a company decide which AI tools to allow?

Start small with a few useful tasks. Set clear rules for review and approvals. Do not share sensitive data until controls are in place. Expand only when it is working safely.
