Many video calls start to struggle as more people join, yet some systems handle dozens or even hundreds of participants smoothly. The difference comes down to how the video streams are managed. Technologies like Multipoint Control Units (MCUs) and Selective Forwarding Units (SFUs) work behind the scenes to make this real-time communication possible, and WebRTC plays a key role in bringing it to the browser.

Most people don’t know what a multipoint control unit does or why video conferencing needs one. In this guide, you’ll learn what a multipoint control unit (MCU) is, how it works, how it compares to an SFU, and what role it plays in modern WebRTC video systems.

Let’s break it down step by step.

What is a Multipoint Control Unit (MCU)?

Multipoint Control Unit Definition and Core Purpose

A multipoint control unit (MCU) is a centralized system that connects multiple participants in a video conference by receiving their audio and video streams, processing them, and distributing a single unified output.

Think of it as the main hub for organizing audio and video communication across various locations. MCUs can be hardware or software. They manage and control multimedia streams in video calls.

The multipoint control unit plays a key role in video conferencing. It focuses on keeping data safe, ensuring stability, and managing video and sound quality. Additionally, it adds features that make video conferencing a useful business tool.

The server handles tasks like switching audio and video streams. It also interoperates with a range of user devices and software, and it can register with an H.323 gatekeeper, which handles call admission and address resolution.

An MCU server has two main parts: the Multipoint Controller (MC) and Multipoint Processors (MP). The MC handles call control and signaling between endpoints, such as negotiating their capabilities. The MPs process the media streams themselves, which includes tasks like mixing and switching multimedia data.

The Evolution From Point-To-Point To Multipoint Conferencing

Before MCUs, video calls could connect only two locations at a time, which made group collaboration impossible. MCUs made group video calls possible by introducing mixing technology for multipoint conferencing.

The system combines audio, video, and data streams from each participant into one feed. This single feed is shared with everyone on the call. It makes group conversations easier. MCU systems also link with tools like gatekeepers and gateways. This boosts their capabilities.

MCUs do more than link calls. They handle tasks like mixing, transcoding, and transrating. Mixing lets users pick video layouts that suit everyone in the meeting.

Transcoding changes the format of a video stream, and transrating adjusts its bitrate. Because the MCU takes on these tasks, user devices have less processing to do.
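To make the split between transcoding and transrating concrete, here is a minimal Python sketch of how an MCU might decide, per endpoint, which operation the outgoing stream needs. The `Endpoint` fields, the codec lists, and the `plan_output` helper are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    name: str
    codecs: list[str]  # codecs the endpoint can decode, in preference order (hypothetical)
    max_kbps: int      # highest bitrate the endpoint or its link can accept


def plan_output(source_codec: str, source_kbps: int, ep: Endpoint) -> dict:
    """Decide whether the mixed stream must be transcoded and/or transrated for one endpoint."""
    # Transcoding: switch format when the endpoint cannot decode the source codec
    codec = source_codec if source_codec in ep.codecs else ep.codecs[0]
    # Transrating: lower the bitrate when the source exceeds what the endpoint accepts
    kbps = min(source_kbps, ep.max_kbps)
    return {
        "endpoint": ep.name,
        "codec": codec,
        "kbps": kbps,
        "transcode": codec != source_codec,
        "transrate": kbps != source_kbps,
    }


endpoints = [
    Endpoint("browser", ["VP8", "H.264"], 2500),
    Endpoint("legacy-room", ["H.263"], 768),
    Endpoint("laptop", ["H.264"], 4000),
]
for ep in endpoints:
    print(plan_output("H.264", 2000, ep))
```

In this example only the legacy room needs both operations; the browser and the laptop can take the 2000 kbps H.264 stream as-is.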

Hardware MCU vs Software MCU

For a long time, hardware MCUs were the only real choice. These were typically RISC-based computing systems running Unix-like operating systems.

Vendors controlled the architecture, which made these systems closed. They often did not work with other vendors’ devices. Although they were hardware appliances, they depended on software locked to a specific system.

This closed design put strong limits on what users could do. New features depended on how often the vendor updated the hardware and software.

Older MCUs connected to external devices through traditional protocols such as SIP and H.323, and they came with hard limits. For example, without cascading, only 25 participants could join a conference at once.

Software MCUs grew popular as new technology let more developers enter the market. General-purpose hardware is now powerful enough that MCU software can run on any x86 server, or even on a typical PC. Organizations can therefore set up a working multipoint control unit in the cloud or on-site, paying only for software licenses.

Software MCUs bring many features that make video conferencing more effective. They can also improve security, because the latest patches can be installed as soon as they are released.

You don’t need to spend on extra hardware, which helps reduce expenses. Now, software-based video transcoding servers make video conferencing affordable. They transform it into an easy-to-access tool for many people.

Enterprise solutions like the Cisco Multipoint Control Unit (Cisco MCU) have been widely used in traditional video conferencing environments. Cisco MCUs are very reliable.

They offer advanced media processing and integrate easily with enterprise communication systems. However, they often require significant investment, and they are gradually being replaced by cloud-based and WebRTC-friendly solutions.

How A Multipoint Control Unit Works In Video Conferencing

A multipoint control unit (MCU) is crucial for video conferencing. Understanding its operation shows why systems rely on this technology.

The MCU acts as a central hub. It receives, processes, and redistributes media streams to all connected participants.

Media Stream Processing Pipeline

The processing pipeline follows a specific sequence of operations. The multipoint control unit receives encrypted streams from all participants.

It then decrypts these streams to access the encoded media. Next comes decoding, which turns the compressed media into a raw format that can be processed.

The system then mixes and composes multiple streams into one coherent output. This composite stream is encoded to make it smaller for easier transmission. It is also encrypted for security and sent to all participants.
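The five stages can be sketched in Python with stand-ins: a one-byte XOR in place of real media encryption and `zlib` in place of a real audio codec. Both substitutions are assumptions made only for illustration, and the XOR step provides no security at all. Streams here are lists of 16-bit PCM samples.

```python
import struct
import zlib

KEY = 0x5A  # toy key: XOR is a placeholder for real encryption and is NOT secure


def encrypt(data: bytes) -> bytes:
    return bytes(b ^ KEY for b in data)


decrypt = encrypt  # XOR with the same key undoes itself


def encode(samples: list[int]) -> bytes:
    # Pack 16-bit little-endian PCM samples, then compress (stand-in for a codec)
    return zlib.compress(struct.pack(f"<{len(samples)}h", *samples))


def decode(payload: bytes) -> list[int]:
    raw = zlib.decompress(payload)
    return list(struct.unpack(f"<{len(raw) // 2}h", raw))


def mix(streams: list[list[int]]) -> list[int]:
    # Sum time-aligned samples and clip to the 16-bit range
    return [max(-32768, min(32767, sum(frame))) for frame in zip(*streams)]


def mcu_process(packets: list[bytes]) -> bytes:
    """Receive -> decrypt -> decode -> mix -> encode -> encrypt."""
    decoded = [decode(decrypt(p)) for p in packets]
    return encrypt(encode(mix(decoded)))


# Each participant sends an encrypted, encoded frame of samples
alice = encrypt(encode([1000, 2000, 30000]))
bob = encrypt(encode([500, -2000, 10000]))
out = mcu_process([alice, bob])
print(decode(decrypt(out)))  # [1500, 0, 32767]
```

Note that `zip` stops at the shortest stream, so this sketch assumes every frame already has the same length; real systems align incoming streams in buffers before mixing.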

Real-time processing algorithms handle compression, decompression, transcoding, and format conversion all at once. Buffering, synchronization, and quality-of-service mechanisms keep performance high for all conference participants, even when network conditions change.
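Buffering is what lets the MCU absorb network jitter before mixing. Below is a minimal sketch of a fixed-depth jitter buffer that reorders packets by sequence number. The `JitterBuffer` class and its `depth` parameter are hypothetical; real implementations also adapt the depth over time and handle sequence-number wraparound.

```python
import heapq
from typing import Optional


class JitterBuffer:
    """Holds up to `depth` packets and releases them in sequence order."""

    def __init__(self, depth: int = 3):
        self.depth = depth
        self.heap: list[tuple[int, bytes]] = []
        self.next_seq: Optional[int] = None

    def push(self, seq: int, payload: bytes) -> list[tuple[int, bytes]]:
        # Drop packets that arrive after their slot has already been released
        if self.next_seq is not None and seq < self.next_seq:
            return []
        heapq.heappush(self.heap, (seq, payload))
        released = []
        # Release the oldest packets once the buffer exceeds its target depth
        while len(self.heap) > self.depth:
            s, p = heapq.heappop(self.heap)
            released.append((s, p))
            self.next_seq = s + 1
        return released


buf = JitterBuffer(depth=2)
out = []
for seq in [1, 3, 2, 5, 4, 6]:  # packets arrive out of order
    out += buf.push(seq, b"frame%d" % seq)
print([s for s, _ in out])  # [1, 2, 3, 4]
```

A deeper buffer tolerates more reordering but adds latency, which is one reason MCU processing delay grows when networks are unstable.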

Audio Mixing And Video Composition

Audio mixing involves sophisticated processing that handles multiple challenges at once. The system adjusts audio levels from various sources so that no participant is too loud or too quiet.

It also reduces noise and cancels echoes to improve audio quality. Automatic gain control is another key function, and voice activity detection identifies who is speaking at any given moment.
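The sketch below illustrates three of these steps on short frames of PCM samples: gain normalization toward a target RMS level (a crude stand-in for automatic gain control), an energy-threshold voice activity detector, and a "mix-minus" output in which each participant hears everyone except themselves. The thresholds, target level, and function names are illustrative assumptions, not values from any particular MCU.

```python
import math

TARGET_RMS = 3000.0    # illustrative target loudness
VAD_THRESHOLD = 500.0  # illustrative RMS above which a frame counts as speech


def rms(frame: list[float]) -> float:
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def normalize(frame: list[float]) -> list[float]:
    # Scale quiet or loud speakers toward the same level (crude gain control)
    level = rms(frame)
    if level == 0:
        return frame
    gain = TARGET_RMS / level
    return [s * gain for s in frame]


def is_speaking(frame: list[float]) -> bool:
    return rms(frame) > VAD_THRESHOLD


def mix_minus(frames: dict[str, list[float]]) -> dict[str, list[float]]:
    """Return one mix per participant that excludes their own audio."""
    active = {name: normalize(f) for name, f in frames.items() if is_speaking(f)}
    length = len(next(iter(frames.values())))
    total = [sum(f[i] for f in active.values()) for i in range(length)]
    out = {}
    for name in frames:
        own = active.get(name, [0.0] * length)
        # Subtract the listener's own contribution, then clip to 16-bit range
        out[name] = [max(-32768.0, min(32767.0, t - o)) for t, o in zip(total, own)]
    return out


frames = {
    "ana": [4000.0, -4000.0, 4000.0, -4000.0],  # loud speaker
    "ben": [1000.0, -1000.0, 1000.0, -1000.0],  # quiet speaker
    "cai": [10.0, -10.0, 10.0, -10.0],          # background noise only
}
mixes = mix_minus(frames)
print(mixes["cai"])  # [6000.0, -6000.0, 6000.0, -6000.0]
```

Subtracting each speaker's own contribution from one shared total keeps the work at one sum per frame plus one subtraction per participant, instead of building every personal mix from scratch.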

Video composition creates layouts that display multiple participants simultaneously. The server can offer different display setups and switch between them automatically based on who is actively speaking.
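Layout selection can be sketched as a function that maps participants to tiles on the output canvas. The two layouts below, an equal grid and an active-speaker view with a strip of thumbnails, are common patterns; the canvas size, function names, and the 3/4 split are assumptions for illustration.

```python
import math

Tile = tuple[int, int, int, int]  # (x, y, width, height) in pixels


def grid_layout(names: list[str], width: int, height: int) -> dict[str, Tile]:
    """Place participants in a near-square grid."""
    cols = math.ceil(math.sqrt(len(names)))
    rows = math.ceil(len(names) / cols)
    w, h = width // cols, height // rows
    return {n: ((i % cols) * w, (i // cols) * h, w, h) for i, n in enumerate(names)}


def speaker_layout(names: list[str], speaker: str, width: int, height: int) -> dict[str, Tile]:
    """Active speaker fills the top 3/4 of the canvas; everyone else shares a bottom strip."""
    main_h = height * 3 // 4
    tiles = {speaker: (0, 0, width, main_h)}
    others = [n for n in names if n != speaker]
    if others:
        w = width // len(others)
        for i, n in enumerate(others):
            tiles[n] = (i * w, main_h, w, height - main_h)
    return tiles


people = ["ana", "ben", "cai", "dev", "eli"]
print(grid_layout(people, 1280, 720)["eli"])            # (426, 360, 426, 360)
print(speaker_layout(people, "cai", 1280, 720)["cai"])  # (0, 0, 1280, 540)
```

Recomputing `speaker_layout` whenever voice activity detection reports a new speaker is one simple way to get the automatic switching described above.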

Transcoding capabilities enable format conversion between different codecs and resolutions. This makes sure different endpoints can work together. It also optimizes bandwidth for various network conditions.

Processing can also be optimized per user rather than per conference, with each participant receiving audio and video settings that give the best quality for their device and network.

Bandwidth Management And Quality Optimization

MCUs use resource management systems to make the best use of bandwidth. Dynamic allocation systems monitor live traffic conditions.

They then adjust how bandwidth is shared among devices according to needs and priorities, taking into account data type, connection quality, and user requirements.
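One simple way to allocate bandwidth by data type and priority is to satisfy the highest-priority streams first and share whatever remains. The Python sketch below does that; the stream names, priority values, and demands are hypothetical.

```python
def allocate(capacity_kbps: float, demands: list[tuple[str, int, float]]) -> dict[str, float]:
    """Grant bandwidth in priority order (lower number = more important).

    Streams at the same priority share what is left in proportion to their demand.
    """
    grants: dict[str, float] = {}
    remaining = capacity_kbps
    for prio in sorted({p for _, p, _ in demands}):
        tier = [(name, d) for name, p, d in demands if p == prio]
        wanted = sum(d for _, d in tier)
        share = max(0.0, min(1.0, remaining / wanted)) if wanted else 0.0
        for name, d in tier:
            grants[name] = d * share
        remaining -= wanted * share
    return grants


demands = [
    ("audio", 0, 64.0),        # audio first, so conversation survives congestion
    ("video-main", 1, 1500.0),
    ("video-thumbs", 1, 500.0),
    ("screen-share", 2, 800.0),
]
print(allocate(1564.0, demands))
# {'audio': 64.0, 'video-main': 1125.0, 'video-thumbs': 375.0, 'screen-share': 0.0}
```

Strict priority tiers are only one possible policy; a real system might instead guarantee every class a minimum share so that lower-priority streams never drop to zero.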

Adaptive video quality control adjusts resolution, frame rate, and audio quality based on network resources and endpoint capabilities.

Adaptive algorithms also handle congestion control, packet loss recovery, and quality adjustment. When packet loss exceeds certain limits, forward error correction kicks in to keep connections stable.
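Forward error correction can be illustrated with the simplest scheme: one XOR parity packet per group of media packets, which lets the receiver rebuild any single lost packet in that group without a retransmission. This is a generic sketch, not the FEC format any particular MCU uses; the loss threshold is an illustrative assumption, and packets in a group are assumed to be the same length.

```python
from functools import reduce
from typing import Optional

LOSS_THRESHOLD = 0.02  # illustrative: enable FEC above 2% packet loss


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(group: list[bytes]) -> bytes:
    # XOR of all packets in the group (packets assumed equal length)
    return reduce(xor_bytes, group)


def recover(received: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Rebuild at most one missing packet (None) from the others plus parity."""
    packets = list(received)
    missing = [i for i, p in enumerate(packets) if p is None]
    if len(missing) > 1:
        raise ValueError("XOR parity can repair only one loss per group")
    if missing:
        present = [p for p in packets if p is not None]
        packets[missing[0]] = reduce(xor_bytes, present, parity)
    return packets


def should_enable_fec(loss_rate: float) -> bool:
    return loss_rate > LOSS_THRESHOLD


group = [b"AAAA", b"BBBB", b"CCCC"]
parity = make_parity(group)
if should_enable_fec(0.05):
    print(recover([b"AAAA", None, b"CCCC"], parity))  # [b'AAAA', b'BBBB', b'CCCC']
```

The trade-off is overhead: one parity packet per group of three adds about 33% to the packet rate, which is why the sketch only turns FEC on once loss crosses the threshold.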

Traffic prioritization helps important data streams get the bandwidth they need. The system categorizes audio, video, and data sharing, giving each a specific priority level. When bandwidth is low, it applies specific policies.

These policies manage resources and keep important communications going. Algorithms also analyze past data and trends to predict future resource needs.
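Predicting resource needs from past data can be as simple as an exponentially weighted moving average (EWMA) of recent usage plus some headroom. The sketch below is one such forecaster; the smoothing factor, the 20% headroom, and the sample values are assumptions, and production systems may use far richer models.

```python
def ewma_forecast(samples: list[float], alpha: float = 0.5) -> float:
    """Exponentially weighted moving average: recent samples count more."""
    estimate = samples[0]
    for x in samples[1:]:
        estimate = alpha * x + (1 - alpha) * estimate
    return estimate


def reserve_kbps(samples: list[float], headroom: float = 1.2) -> float:
    # Reserve the forecast plus 20% headroom for sudden spikes (illustrative)
    return ewma_forecast(samples) * headroom


usage = [800.0, 1000.0, 1200.0, 1600.0]  # kbps measured over recent intervals
print(ewma_forecast(usage))  # 1325.0
print(reserve_kbps(usage))
```

A larger `alpha` reacts faster to sudden changes but is noisier; a smaller one is smoother but slower to notice a growing meeting.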

Multipoint Control Unit in WebRTC Video Conferencing

WebRTC (Web Real-Time Communication) drives many video conferencing platforms. It allows real-time audio and video in browsers and mobile apps. In a pure peer-to-peer (mesh) WebRTC setup, every participant connects directly to every other participant. This works well for small calls but becomes inefficient as more participants join.

A multipoint control unit in WebRTC acts as a central media server. It receives streams from all users, processes them, and sends back one combined stream.

This reduces device load and ensures consistent quality across participants. Many WebRTC systems choose SFUs for scalability. MCUs are still used for stream mixing, recording, and legacy compatibility.
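The inefficiency of a pure peer-to-peer setup comes from how connections grow. In a full mesh, each of n participants keeps a connection to every other participant, so the call needs n(n-1)/2 peer connections and each client uploads its stream n-1 times. With a central media server such as an MCU, each client keeps just one connection. A quick Python check, with function names chosen for illustration:

```python
def mesh_stats(n: int) -> dict[str, int]:
    # Full mesh: every pair of participants shares one peer connection
    return {
        "total_connections": n * (n - 1) // 2,
        "uploads_per_client": n - 1,
        "downloads_per_client": n - 1,
    }


def mcu_stats(n: int) -> dict[str, int]:
    # Star topology: each participant connects only to the MCU,
    # sends one stream, and receives one composite stream
    return {"total_connections": n, "uploads_per_client": 1, "downloads_per_client": 1}


for n in (3, 10, 25):
    print(n, mesh_stats(n), mcu_stats(n))
```

At 10 participants the mesh already needs 45 connections and each client uploads nine copies of its video, while the MCU keeps every client at one upload and one download.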

Types of MCUs for Video Conferencing

Multipoint control unit implementations vary based on deployment approach and organizational requirements. Selecting the right type depends on meeting size, feature needs, and budget constraints.

Hardware-Based MCUs

Hardware-based MCUs are dedicated physical appliances. They suit large organizations that hold video conferences frequently.

These physical devices deliver robust performance and can support many participants simultaneously. The dedicated hardware architecture provides reliability for mission-critical communications.

The main drawback is the significant initial investment and ongoing maintenance cost. Organizations need to budget for both the purchase of equipment and regular upkeep.

Hardware MCUs are suitable when you need reliable performance and can invest upfront.

Software MCUs

Software MCUs are the software counterpart to hardware units. They run on standard x86 servers or virtual machines.

This means you don’t need special equipment. This approach gives you more flexibility. You can use your existing server resources to deploy them.

Cost-effectiveness stands out as a primary advantage. You pay for software licenses rather than expensive dedicated hardware.

Software MCUs are good for small businesses or groups with changing needs. They are easy to deploy, but they may not scale as well and may lack the raw performance of dedicated hardware.

Cloud-Based MCUs

Cloud-based MCUs utilize cloud computing resources to facilitate video conferencing. They offer excellent scalability since resources adjust dynamically to meet demands.

The pay-as-you-go model lets you pay only for what you use. This way, you avoid paying for unused capacity.

Huawei’s CloudMCU shows this model well. It supports virtual or cloud-based deployment and offers flexible scalability. The platform allows one-key deployment, remote inspection, and easy upgrades.

This makes operation and maintenance simple. On the downside, cloud MCUs require a stable internet connection to function properly, and some organizations worry about security when handling sensitive communications in the cloud.

Hybrid MCU Solutions

Hybrid MCUs bring together the benefits of hardware and software methods. They provide the ability to adapt and grow according to different requirements.

This approach strikes a balance between performance and cost.

Companies can rely on dedicated hardware for their main capacity. They can also use software or cloud resources for extra support.

During busy times, this setup helps them meet demand. It avoids the risk of overcommitting fixed resources. Another benefit is that it helps organizations transition gradually from old hardware to newer, software-based systems.

Why Video Conferencing Needs a Multipoint Control Unit

MCU technology has been the backbone of large-group conferencing systems for many years. The architecture offers key benefits that are crucial for some video conferencing situations.

Enabling Multi-Party Video Calls At Scale

The MCU links all participants through a single central point and can manage several conferences at once. Cascading lets you combine two or more conferences.

This creates larger sessions with many more participants, and this distributed setup greatly reduces the load on network resources. The bandwidth required across a cascaded conference link equals only one audio and video stream between conferences, far less than the combined bandwidth of all participants.
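The cascading saving is easy to quantify. The sketch below compares the traffic crossing the link between two sites when a second MCU is cascaded (one mixed stream in each direction) with the traffic if every remote participant connected to a single MCU across that link. The per-stream bitrate and participant count are illustrative assumptions.

```python
def link_kbps_single_mcu(remote_participants: int, stream_kbps: float) -> float:
    # Every remote participant sends one stream up and receives one mix back over the link
    return 2 * remote_participants * stream_kbps


def link_kbps_cascaded(stream_kbps: float) -> float:
    # The two MCUs exchange one mixed stream in each direction, regardless of headcount
    return 2 * stream_kbps


remote = 20      # participants at the remote site (illustrative)
stream = 2000.0  # kbps per audio+video stream (illustrative)
print(link_kbps_single_mcu(remote, stream))  # 80000.0
print(link_kbps_cascaded(stream))            # 4000.0
```

The cascaded figure stays constant as the remote site grows, while the single-MCU figure grows with every person who joins there.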

Reducing Bandwidth And Device Requirements

Without central mixing, an endpoint in a 10-participant conference may need to receive nine streams and send its own. Since a single high-definition stream takes up over 5 Mbps, that adds up to more than 50 Mbps for one endpoint.

MCUs fix this issue by moving heavy stream processing from client devices to a central server. Instead of processing multiple streams, devices decode and render one stream.

Some advanced setups keep the downstream bandwidth for each endpoint at a steady 1.5 Mbps, regardless of the number of participants.
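The arithmetic behind these figures can be reproduced with a small model. It assumes every participant sends one 5 Mbps high-definition stream and, in the MCU case, receives a single 1.5 Mbps composite, using the numbers above. The SFU and mesh rows are included for comparison, and all three are simplifications that ignore audio, simulcast, and protocol overhead.

```python
def endpoint_mbps(n: int, stream: float = 5.0, composite: float = 1.5) -> dict[str, tuple[float, float]]:
    """(upload, download) in Mbps for one endpoint in an n-person call."""
    others = n - 1
    return {
        "mesh": (others * stream, others * stream),  # send to and receive from every peer
        "sfu": (stream, others * stream),            # send once, receive each peer's stream
        "mcu": (stream, composite),                  # send once, receive one composite
    }


for arch, (up, down) in endpoint_mbps(10).items():
    print(f"{arch}: up {up} Mbps, down {down} Mbps, total {up + down} Mbps")
# mesh: up 45.0 Mbps, down 45.0 Mbps, total 90.0 Mbps
# sfu: up 5.0 Mbps, down 45.0 Mbps, total 50.0 Mbps
# mcu: up 5.0 Mbps, down 1.5 Mbps, total 6.5 Mbps
```

The MCU's download stays at 1.5 Mbps whether n is 10 or 50, while the SFU and mesh figures grow linearly with n.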

Unified Viewing Experience Across Devices

The centralized server ensures a consistent viewing experience for all participants. It provides a single combined video stream.

This reduces decoding needs and lowers bandwidth usage. The single-stream method simplifies client-side integration. It makes front-end development easier while moving complexity to the back-end.

Integration with Existing Systems

One of the greatest benefits is easy integration with external legacy business systems. The architecture combines all incoming streams into a single easy-to-consume outgoing stream.

This composite output makes integration simpler with services beyond standard protocols. Organizations that have hybrid environments can use this mediation capability. It helps older endpoints communicate with newer devices.

Centralizing Control and Management

Conference sessions are managed, configured, and modified through intuitive, web-based interfaces. This offers easy, high-level conference control and administrative flexibility to enhance user experiences. Centralized processing creates ready-made layouts. Additionally, it adjusts streams before sending them to clients.

Supporting Legacy Devices and Systems

MCUs support devices that can both send and receive video streams. They also work with devices that can only receive video.

Terminals without video cameras join as voice participants. They can still see other participants. This helps older hardware with limited processing power. It also benefits devices that don’t support modern codecs.

Simplifying the Conference Experience

The architecture keeps quality high, even on low-powered devices or weak networks. It does this by offloading processing tasks to a central server.

MCUs also simplify jitter handling. Because each client receives one composite stream, it has only one stream to buffer and synchronize, and the centralized mixing takes most of the jitter compensation complexity off the client.

How Platforms Like Zoom Use MCU and SFU

Modern platforms like Zoom, Microsoft Teams, and Google Meet use a mix of MCU and SFU architectures. Zoom typically relies on SFU for better scalability and low latency. But for certain tasks, like cloud recording or older integrations, it switches to MCU-like processing.

This helps produce a single combined stream. This hybrid approach helps platforms balance performance and cost. It also improves user experience for different use cases.

MCU Challenges and Modern Alternatives

Limitations of MCU Architecture

Despite major technological progress, multipoint control unit architecture still faces serious challenges in demanding communication settings.

Scalability is a critical concern for large conferences with numerous participants. Real-time transcoding and protocol translation demand significant processing power. This causes bottlenecks that restrict system capacity and increase latency.

Processing latency introduces delays typically ranging from 100 to 300 ms. Any errors in mixing can affect all participants concurrently. The centralized processing model creates a single point of failure.

Server Resource Demands And Costs

Transcoding many audio and video streams into one media stream is very CPU-intensive. Encoding it at different resolutions in real time adds to the load.

The more clients connect to the server, the higher its CPU requirements. Multipoint control unit costs can be 10 to 50 times higher than SFU costs for equivalent participant loads.

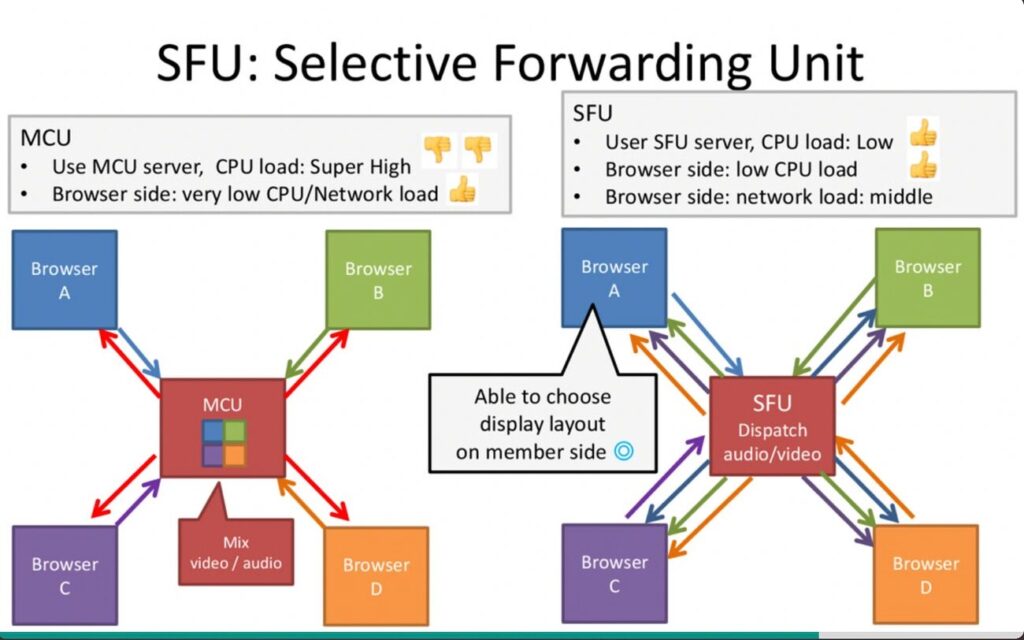

SFU Vs MCU: Understanding The Differences

The main difference between MCU and SFU lies in media stream handling. MCUs send one combined stream to everyone. SFUs, on the other hand, forward specific streams without mixing them.

SFUs provide better scalability and lower latency because they skip decoding and re-encoding. They are also more cost-effective, because forwarding packets needs far less CPU than transcoding them.
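The difference in media handling shows up clearly in code. An SFU's core job is routing: each incoming packet is copied, still encoded, to every other participant, with no decode or mix step. The routing function below is a hypothetical minimal sketch of that idea; real SFUs also choose among quality layers and react to congestion feedback.

```python
from collections import defaultdict


def sfu_route(packets: list[tuple[str, bytes]], participants: list[str]) -> dict[str, list[tuple[str, bytes]]]:
    """Forward each (sender, encoded_payload) to every other participant, unchanged."""
    outboxes: dict[str, list[tuple[str, bytes]]] = defaultdict(list)
    for sender, payload in packets:
        for receiver in participants:
            if receiver != sender:
                # No decoding, mixing, or re-encoding: the payload bytes pass through as-is
                outboxes[receiver].append((sender, payload))
    return dict(outboxes)


people = ["ana", "ben", "cai"]
routed = sfu_route([("ana", b"\x01"), ("ben", b"\x02"), ("cai", b"\x03")], people)
print(routed["cai"])  # [('ana', b'\x01'), ('ben', b'\x02')]
```

Each receiver ends up with n-1 separate streams to decode, which is why SFU clients need more download bandwidth and decoding power than MCU clients, while the server itself does almost no media processing.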

Many modern WebRTC applications prefer SFUs for their lower latency and better scalability. MCUs, however, are still the go-to choice in some situations.

They work best when you need a single composite stream. They also make recording simple, and they fit well with legacy systems.

| Feature | MCU | SFU |
| --- | --- | --- |
| Processing | Centralized (mixing) | Forwarding |
| Latency | Higher | Lower |
| Scalability | Limited | High |
| Cost | Expensive | Cost-effective |
| Best For | Recording, legacy systems | Large-scale WebRTC apps |

When To Choose MCU Over Alternatives

A multipoint control unit is well suited to meetings with 10 or more participants, because each endpoint still receives a single composite stream no matter how many people join.

Choose an MCU when you need a single composite stream. It is a strong fit for recording, legacy integration, or clients with limited bandwidth.

Conclusion

MCUs remain valuable for large video conferences, helping maintain quality when 10 or more participants are involved. Software and cloud solutions have largely replaced costly hardware MCUs, but the core technology still offers benefits that newer options cannot match in certain scenarios.

SFU architectures offer better scalability and lower costs in many scenarios. MCUs, by contrast, excel at legacy device support, work well in low-bandwidth settings, and can record single composite streams.

There is no one-size-fits-all solution in video conferencing. MCUs, SFUs, and WebRTC each solve different problems. If you need simplicity, recording, and legacy support, an MCU is still a powerful choice.

If you need scale and speed, an SFU is often the better option. The best systems today blend all three. They offer performance, flexibility, and reliability, even at scale.

FAQs

What does multipoint video conferencing mean?

Multipoint video conferencing allows three or more people from different places to connect and talk at the same time. It goes beyond regular one-on-one calls. This technology supports modern business collaboration needs across distributed teams and remote workers.

How does a multipoint control unit function in a video conferencing system?

A Multipoint Control Unit is a central hub. It receives audio and video streams from all participants. It processes and mixes these into one combined stream. It then sends this single stream back to everyone in the conference. This centralized processing reduces bandwidth needs and ensures consistent quality across all devices.

What advantages does video conferencing provide compared to audio meetings?

Video conferencing can help people remember and understand more, because visual cues carry information that sound alone does not. Participants can see facial expressions and body language, and they can share visual content. This helps communication and improves meeting outcomes.

When should I choose a multipoint control unit over alternative architectures like SFU?

MCUs work best for conferences with 10 or more participants where endpoints have limited bandwidth or processing power, because each endpoint receives one composite stream no matter how many people join. They also support old devices, serve clients with slow connections, record single composite streams, and integrate with older business systems.

What are the main limitations of the multipoint control unit architecture?

MCU systems face several challenges. First, they require substantial computing power, which makes them expensive to run. Second, there is processing latency, which ranges from 100 to 300 ms. Third, they face difficulties with scalability in large conferences. All processing is centralized, which creates a risk of a single point of failure.

What is a Multipoint Control Unit (MCU)?

A multipoint control unit (MCU) is a central server that processes, mixes, and distributes audio and video streams during a video conference. It then sends one combined stream to all participants.
