Quantum computing is no longer just an idea in research papers. In 2024, real machines, cloud platforms and new experiments showed clear progress in how these systems are built and used. At the same time, there are still major limits, such as noise, scale and cost, that stop the technology from reaching its full potential.

This article explains the latest quantum computing breakthroughs of 2024, what they mean in practice, and which problems are still slowing the field down.

Quantum Computing 2024 in Simple Terms

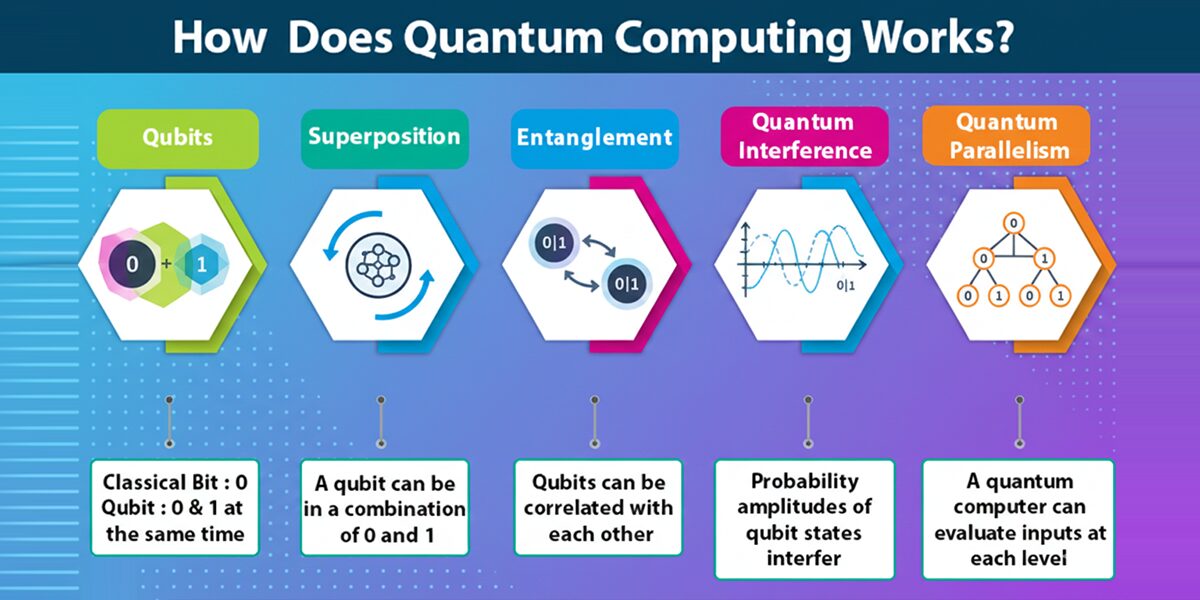

Quantum computing is a different way of processing information that uses qubits instead of normal bits.

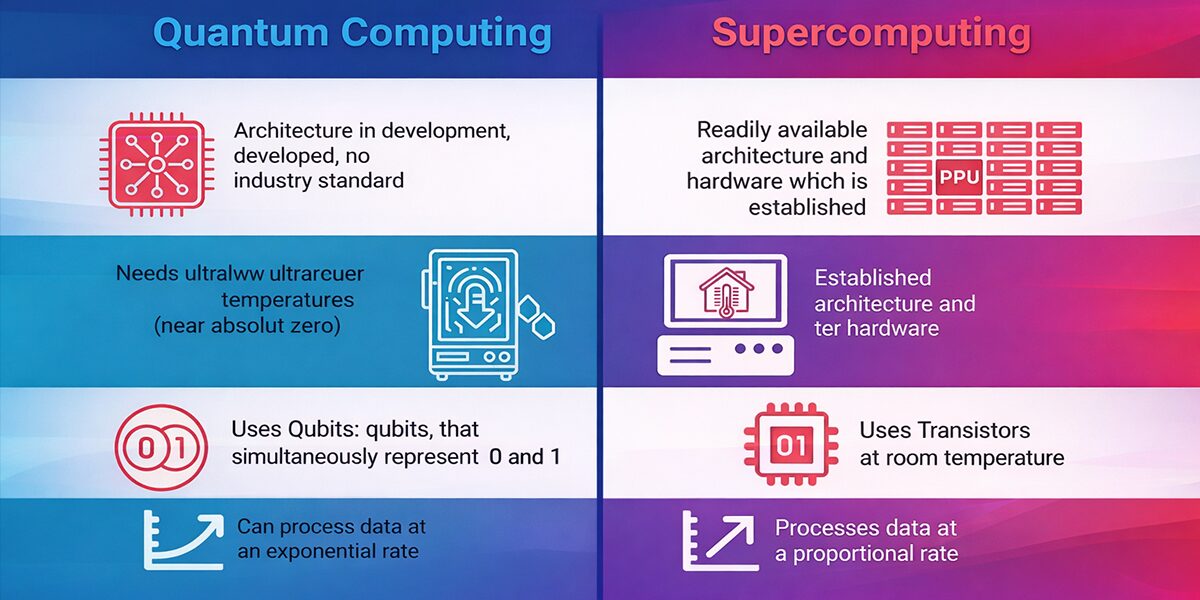

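A normal bit is either 0 or 1. A qubit can be 0, 1 or a weighted mix of both at the same time, which is called superposition. Groups of qubits can also be linked in a special way called entanglement, where the qubits can only be described together and not one by one. Because of this, a quantum algorithm can work with many possibilities at once and use interference to make the right answers more likely when the qubits are measured. For some types of problems, this can be much faster than any classical supercomputer.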
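To make superposition and entanglement concrete, here is a minimal sketch that simulates two qubits as a plain list of four amplitudes. The function names are illustrative and not taken from any quantum library, and the simulation runs on an ordinary computer. It prepares the standard textbook "Bell state", where the two qubits are always measured as 00 or 11 and never 01 or 10.

```python
import math

# A two-qubit state is a list of four amplitudes for 00, 01, 10 and 11.
# The probability of each outcome is the squared size of its amplitude.

def apply_hadamard_first(state):
    """Put the first qubit into an equal mix of 0 and 1 (Hadamard gate)."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def apply_cnot(state):
    """Flip the second qubit whenever the first qubit is 1 (swaps 10 and 11)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def probabilities(state):
    """Chance of measuring 00, 01, 10 and 11."""
    return [abs(a) ** 2 for a in state]

start = [1.0, 0.0, 0.0, 0.0]              # both qubits start as 0
superposed = apply_hadamard_first(start)  # first qubit is now a mix of 0 and 1
bell = apply_cnot(superposed)             # the two qubits are now entangled
bell_probs = probabilities(bell)
print(bell_probs)
```

A measurement still returns only one outcome, either 00 or 11 with equal chance. The two results are perfectly correlated, which is what entanglement means in practice.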

Inside a quantum computer, three ideas matter most:

- Physical qubits are the actual hardware units on the chip.

- Logical qubits are more reliable units built from several physical qubits using error correction.

- A fault-tolerant quantum computer would use logical qubits well enough to run long programs without errors taking over.

So, is quantum computing real today? Yes. Companies already run devices with tens to a few hundred qubits, often available through the cloud. However, most current systems belong to what researchers call the noisy intermediate-scale quantum (NISQ) era: their qubits are noisy, and there are too few of them for full error correction. These devices are useful for narrow tasks and experiments, but they cannot yet run large general-purpose algorithms, such as those that would break standard encryption.

When people talk about quantum computing in 2024, they are usually referring to three things at the same time: better qubits, more practical algorithms and a stronger ecosystem around the hardware.

Breakthrough 1: Error Correction and More Reliable Qubits

Google’s Willow Chip: Making Qubits Less Noisy

Noise is one of the biggest problems with quantum computing today. Qubits lose their information quickly, and small errors build up until the final result cannot be trusted. Error-correction schemes try to fix this by combining several physical qubits into a single logical qubit that can survive longer.
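The basic idea of trading many unreliable units for one reliable unit can be shown with a much simpler classical relative of quantum codes: a repetition code with majority voting. This is an analogy only, not the scheme used on real quantum chips, and the 1% error rate is an assumed number chosen for illustration.

```python
from math import comb

def logical_error_rate(p, copies):
    """Chance that a majority of `copies` independent bits flip,
    when each bit flips with probability p."""
    majority = copies // 2 + 1
    return sum(
        comb(copies, k) * p**k * (1 - p) ** (copies - k)
        for k in range(majority, copies + 1)
    )

# Assumed physical error rate of 1% per bit.
for copies in (1, 3, 5, 7):
    print(copies, logical_error_rate(0.01, copies))
```

With one copy the error rate is simply 1%. With three copies the majority vote fails only when at least two bits flip, which is far less likely, and each extra pair of copies lowers the rate again.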

In December 2024, Google Quantum AI introduced Willow, a 105-qubit chip that achieved below-threshold quantum error correction. The team arranged qubits in grids such as 3×3, 5×5 and 7×7 (different code distances in a surface code). When they increased the grid size, the error rate of the logical qubit went down instead of up. In other words, adding more qubits made the stored information safer, not weaker.

This result matters because it shows that:

- scaling a quantum processor can actually reduce errors

- encoded qubits can keep information longer than single physical qubits

- large fault-tolerant machines are technically possible if we can reach enough scale
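What "below threshold" means can be sketched with a rule of thumb that is common in the surface-code literature: the logical error rate scales roughly as (p / p_th) raised to the power (d + 1) / 2, where p is the physical error rate, p_th is the threshold and d is the code distance. The numbers below (p, p_th and the prefactor) are assumptions chosen for illustration. They are not measurements from Willow.

```python
def logical_error_estimate(p, p_th, distance, prefactor=0.1):
    """Rule-of-thumb logical error rate for a distance-`distance` code.
    p, p_th and prefactor are illustrative assumptions, not measured values."""
    return prefactor * (p / p_th) ** ((distance + 1) / 2)

# Below threshold (p < p_th): larger grids give fewer logical errors.
below = [logical_error_estimate(0.005, 0.01, d) for d in (3, 5, 7)]

# Above threshold (p > p_th): larger grids make things worse.
above = [logical_error_estimate(0.02, 0.01, d) for d in (3, 5, 7)]

print("below threshold:", below)
print("above threshold:", above)
```

In this toy model, each step up in grid size halves the logical error rate when the hardware is below threshold and doubles it when the hardware is above. That is why crossing the threshold matters more than the raw qubit count.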

Willow also ran a demanding test called random circuit sampling. This test would take an enormous amount of time on a top classical supercomputer but finished in minutes on the chip.

It does not solve a direct business problem, but it is strong evidence that quantum hardware can outperform classical machines on certain carefully chosen tasks.

Topological Qubit Breakthrough

Another important 2024 result came from Quantinuum together with researchers at Harvard and Caltech. They reported one of the first convincing experimental realizations of a topological qubit on their H2 trapped-ion system.

In simple terms:

- A topological qubit stores information in global patterns of the system, not just at a single point

- Small local disturbances are less likely to change that information

- This gives the qubit built-in resistance to certain kinds of error

The team used three-level systems called qutrits and carried out operations that matched long-standing theoretical ideas about topological quantum computing. The experiment was still small, but it supports the idea that future topological designs could encode logical qubits using fewer physical resources than today’s surface-code approaches.

If that holds at larger sizes, large-scale machines would be easier and cheaper to build, which makes this one of the most meaningful breakthroughs of the year.

Breakthrough 2: Algorithms and Applications Move Closer to Reality

Better qubits only matter if we can use them on real problems. In 2024, many research groups focused on hybrid approaches that mix classical computing, AI and quantum hardware so each part handles the work it does best.
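A common shape for such hybrid work is a variational loop: a quantum device evaluates a cost (often an energy) for given circuit settings, and a classical optimizer adjusts the settings. The sketch below is a toy version under stated assumptions. The "quantum" step is simulated with ordinary math for a single qubit, the cost function (the Z value plus half the X value) is made up for illustration, and none of it represents a specific project mentioned in this article.

```python
import math

def energy(theta):
    """Stand-in for the quantum step: rotate one qubit by `theta`
    and return the measured average of Z plus 0.5 times X."""
    a0, a1 = math.cos(theta / 2), math.sin(theta / 2)  # qubit amplitudes
    average_z = a0 * a0 - a1 * a1
    average_x = 2 * a0 * a1
    return average_z + 0.5 * average_x

def parameter_shift_gradient(theta):
    """Slope of the energy, computed from two extra energy evaluations
    (the parameter-shift rule, which is exact for this kind of rotation)."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))

def minimize(theta=0.1, learning_rate=0.2, steps=200):
    """Classical step: plain gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)

best_theta, best_energy = minimize()
print(best_theta, best_energy)
```

On real hardware, `energy` would be replaced by repeated runs of a circuit on a quantum processor, while the optimizer stays classical. The split keeps the quantum part short, which matters on noisy devices.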

Chemistry and Materials

One of the most active areas was chemistry and materials science.

Microsoft’s Azure Quantum Elements platform combined AI, high-performance computing and quantum techniques to study complex reaction networks. In one project, scientists ran more than a million advanced chemistry calculations and used quantum tools to reach very accurate energy estimates.

At the same time, a collaboration led by Pasqal used neutral-atom processors to study how water molecules arrange themselves inside tiny pockets in proteins. This detail strongly affects how drugs bind to their targets and is very hard to model with classical methods alone.

The message is simple: in a few carefully chosen cases, quantum tools are starting to help with simulations that are extremely costly on traditional machines. This is where some of the first practical quantum computing advances are appearing.

AI and Machine Learning

Quantum ideas also appeared in AI and machine learning. Instead of trying to replace classical models, researchers looked for ways to support them.

Quantinuum developed a quantum-based natural language model that uses circuits to represent sentence structure while still being trainable and understandable.

Terra Quantum built a hybrid quantum neural network for classifying liver images using only a handful of qubits in a federated learning setup, so patient data stayed inside each hospital.

These projects are early, but they show that small quantum models can work alongside existing AI systems, especially in sensitive settings where privacy and interpretability matter.

Physics, Engineering and Simulation

Quantum computers are also starting to act as scientific tools.

In 2024, teams used real hardware to explore:

- Plasmas, in work by Riverlane and MIT related to fusion energy and other high-temperature systems

- Fluid flow, in BQP’s quantum-assisted simulations of simplified jet engine models

- Ideas from cosmology and quantum field theory, where quantum devices were used to test behaviors that are hard to study in any other way

These demonstrations are small in scale, but they show how quantum computing can support scientists as part of larger simulation workflows rather than replacing classical systems.

Breakthrough 3: Industry-Scale Chips, Cloud Access and Investment

Stronger Processors and Cloud Platforms

The year was not only about lab experiments. It was also about making hardware more useful in practice.

Quantinuum’s H2-1 trapped-ion system reached 56 fully connected qubits with very high gate quality. At the same time, companies such as IBM, Google, Microsoft, Amazon and IonQ expanded their quantum cloud services. This lets researchers and businesses run experiments on different types of quantum devices without owning the equipment.

The key shift in 2024 was:

- Fewer headlines about raw qubit counts

- More focus on quality, stability and error correction

This change is essential if quantum computers are going to support real workloads instead of remaining purely experimental.

Funding and Market Growth

On the business side, investment in the field kept rising. Startups working on hardware, software and components together raised several billion dollars in 2024. Governments launched and expanded national programs worth tens of billions over the coming years. Direct revenue from quantum computing is still small, but many forecasts expect it to grow as the ecosystem matures.

This long-term commitment is important because large, reliable quantum systems require:

- years of engineering to move from one-off demos to stable products

- specialized teams across physics, engineering and software

- major infrastructure, from fabrication to cryogenic cooling and control electronics

The money flowing into the field is a sign that many stakeholders see today’s progress as real, even if the big payoffs are still in the future.

Main Quantum Computing Challenges After 2024

Even after a year full of results, quantum computing is still in its early days. Several deep challenges remain and will shape what comes next.

1. Scaling Up to Large Systems

The most powerful algorithms are expected to need thousands of logical qubits and possibly millions of physical ones. Current chips like Willow and H2 are important steps, but they are still far from that scale. Each logical qubit usually needs many physical qubits, so today’s chips can run only a few logical qubits at once.
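The jump from thousands of logical qubits to millions of physical ones follows from simple arithmetic. As a rough sketch, assume a standard "rotated" surface-code layout, where a grid of size d uses d × d data qubits plus d × d − 1 helper qubits for measurement. The grid sizes 15 and 25 and the target of 1,000 logical qubits are assumptions for illustration, and the count ignores extra overhead such as routing between logical qubits.

```python
def physical_per_logical(distance):
    """Qubits in one surface-code patch: distance^2 data qubits
    plus distance^2 - 1 measurement qubits (assumed rotated layout)."""
    return 2 * distance * distance - 1

def total_physical(logical_qubits, distance):
    """Physical qubits for a machine, ignoring routing and other overhead."""
    return logical_qubits * physical_per_logical(distance)

for d in (7, 15, 25):
    print(d, physical_per_logical(d), total_physical(1000, d))
```

A single 7×7 patch already needs 97 qubits, which is most of a 105-qubit chip. At a grid size of 25, a machine with 1,000 logical qubits would need about 1.25 million physical qubits under these assumptions.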

Scaling from dozens or hundreds of qubits to millions is one of the hardest engineering tasks in modern technology and remains a central problem for the field.

2. Noise and Engineering Complexity

Qubits are extremely fragile. Superconducting devices must operate close to absolute zero, and their qubits typically hold a quantum state for only microseconds before it decays. Tiny disturbances from the environment, control electronics or fabrication defects can spoil a calculation.

Large systems therefore need:

- Complex cooling equipment

- Precise control hardware, often using lasers or microwaves

- Careful layout to reduce interference and crosstalk between qubits

Coordinating, calibrating and stabilizing many interacting qubits at once is a major technical challenge and one of the main reasons quantum computers are hard to build.

3. Limits of Algorithms and Verification

On the software side, there are also important limits.

Only a few classes of problems are known to benefit clearly from quantum speedups, such as factoring, some search tasks and certain physics simulations. Many proposed optimization and machine learning methods are still experimental, and it is not always clear when they will beat the best classical algorithms.

Checking the output of a large quantum computation is also difficult when the system is too large to simulate directly. This raises new questions about reliability and trust.

4. Security and Encryption

Security is one of the most widely discussed topics around quantum computing. In theory, a large fault-tolerant quantum computer could run Shor’s algorithm and break common public-key systems such as RSA and elliptic-curve schemes. No such machine exists today, but encrypted data stored now could be at risk in the future.
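The link between Shor’s algorithm and factoring can be shown on a toy number. Shor’s method turns factoring into finding the "order" of a number: the smallest r such that a multiplied by itself r times leaves remainder 1 when divided by N. In the sketch below, the order is found by brute force on a classical computer, which is exactly the step that becomes hopeless for large N and that a quantum computer would speed up. The function names are illustrative.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.
    In Shor's algorithm this is the step done on quantum hardware.
    Requires gcd(a, n) == 1, otherwise no such r exists."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a, n):
    """Classical post-processing: turn the order of a into two factors of n.
    Returns None when this choice of a does not work and another is needed."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared      # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                     # odd order: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                     # trivial case: try a different a
    p = gcd(half - 1, n)
    return p, n // p

factors = factor_from_order(7, 15)
print(factors)  # (3, 5)
```

For N = 15 the loop finishes instantly. For the numbers used in real RSA keys, which have hundreds of digits, the brute-force search is far out of reach for classical machines, and that gap is what a large fault-tolerant quantum computer would close.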

To prepare for this, many organizations are starting to adopt post-quantum cryptography (PQC), which uses new mathematical problems that are believed to be safe even if powerful quantum machines become available.

5. Skills, Cost and Access

Finally, there are human and economic issues.

The field requires people who understand physics, engineering, computer science and mathematics at a deep level, and that mix of skills is rare. Building and operating quantum hardware is also expensive.

For now, most users work with this technology through shared cloud platforms and research partnerships instead of owning their own systems. That is one reason most projects are still pilots rather than large-scale deployments.

Emerging Real-World Use Cases From 2024

Even if fully fault-tolerant machines are still in the future, work in 2024 gives a clearer picture of where early value may appear.

1. Drug Discovery and Health

Researchers are using quantum-inspired methods and early devices to:

- study how molecules react and bind in complex environments

- model how water and other solvents surround proteins

- support medical decisions, such as transplant matching, with hybrid quantum AI models

The goal is to make research faster and more precise so that promising ideas can move from the lab to real treatments more quickly.

2. Materials, Energy and Climate

In materials and energy research, teams are testing quantum tools to:

- design better batteries and catalysts

- improve key industrial reactions with lower emissions

- model plasmas for fusion energy

- explore advanced fluid and climate models that strain classical supercomputers

More accurate simulations could lead to better designs, cleaner processes and new materials.

3. Finance, Logistics and Optimization

In finance and logistics, experiments focus on:

- portfolio design and risk analysis

- routing, scheduling and matching problems

Most of these studies still run on simulators or modest hardware, but they help organizations learn how quantum optimization might fit into existing decision-making systems once the technology matures.

4. AI and Data Analytics

Research groups are building quantum routines for tasks such as matrix multiplication, eigenvalue estimation and dimensionality reduction. Over time, these building blocks could sit inside larger AI and analytics pipelines, with classical hardware doing most of the work and quantum accelerators handling specific sub-tasks.

What To Expect After the Breakthroughs of 2024

Taken together, the quantum computing breakthroughs of 2024 give a balanced picture of where the field stands.

Today, the landscape looks like this:

- hardware in the NISQ regime with up to a few hundred physical qubits

- clear demonstrations of quantum advantage on selected benchmarks

- real pilot projects in chemistry, materials, AI and physics

Over the next few years, it is reasonable to expect:

- more and better logical qubits with lower error rates

- wider use of hybrid quantum-classical workflows in sectors such as chemicals, life sciences and finance

- continued rollout of quantum-safe cryptography and early quantum communication networks

Looking further into the late 2020s and 2030s, many experts anticipate:

- the first fault-tolerant machines able to run long programs on tens or hundreds of logical qubits

- clear advantages on real industrial problems rather than just test cases

- deeper integration of quantum processors into cloud and AI systems as specialized accelerators

Quantum computing is not a magic shortcut that arrives overnight; unsolved problems with noise, scale and cost mean progress is gradual. The story of 2024 is one of steady progress, where better qubits, smarter codes, more useful algorithms and a growing ecosystem slowly turn a fragile concept into a practical technology.

FAQ: Latest Breakthroughs in Quantum Computing 2024

Is quantum computing real in practice?

Yes. Quantum devices are already used in research, education and pilot projects through cloud access, but they are not yet ready for broad everyday workloads.

What changed most in 2024?

The main shift was from simply adding more qubits to improving quality, error correction and practical use cases in areas such as chemistry, materials science and AI.

Are quantum computers close to breaking common encryption?

No. Current machines are far too small and noisy. The risk is long term, which is why work on quantum-safe cryptography has started early.

What are the main challenges now?

The hardest open problems are scaling to many stable logical qubits, controlling noise in complex hardware and proving clear advantages over strong classical algorithms.

Which areas are likely to benefit first?

Early benefits are most likely in chemistry, new materials, selected optimization tasks and specialized data analysis, where today’s algorithms already match the strengths of early quantum hardware.
